本文分享Profile分析(非pipeline版本)

Profile分析及优化指南

背景

我们时常遇到sql执行时间不及预期的情况,为了优化sql达到预期查询时延,我们能够做哪些优化。本文旨在分析查询profile各阶段耗时是否合理以及对应优化方式。

准备

打开profile分析上报。

使用mysqlclient连接starrocks集群,

mysql -h ip -P9030 -u root -p xxx

然后输入

##该参数开启的是session变量,若想开启全局变量可以set global is_report_success=true;一般不建议全局开启,会略微影响查询性能

mysql> set is_report_success=true;

该参数会打开profile上报,后续可以查看sql对应的profile,从而分析sql瓶颈在哪。如何进一步优化。

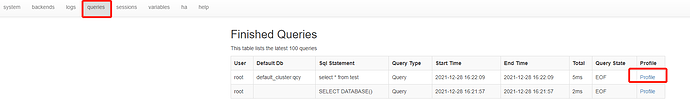

如何获取profile?

如上设置打开profile上报后,打开fe的http界面(http://ip:8030),如下点击queries后,点击相应sql后的profile即可查看对应信息。

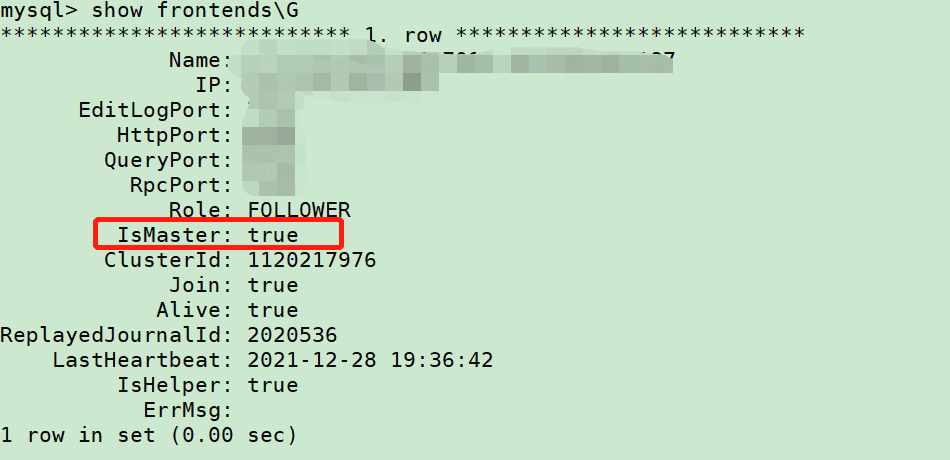

注:此处需要进master的http页面。如不确定集群哪台是master,可以show frontends查看IsMaster值为true的ip

explain分析

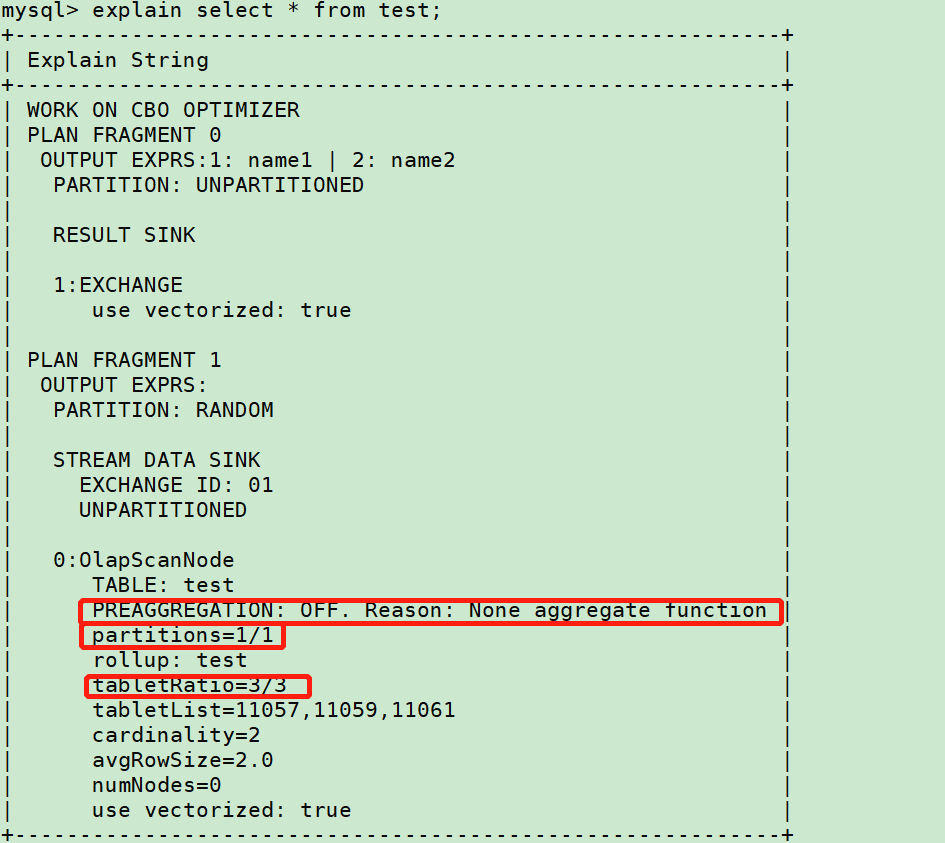

Explain sql获取执行计划,如下

分区分桶

上图中

partitions字段x/xx表示 查询分区/总分区

tabletRatio字段x/xx表示 查询分桶/总分桶

查看对应查询sql是否包含分区字段,是否正确裁剪。如未正常裁剪,确认是否有以下问题:

- 字段类型不一致

- 字段有函数 eg:

date_format('2009-10-04 22:23:00', '%W %M %Y')

存储层聚合

何时需要存储层聚合?

- 聚合表的聚合发⽣在导⼊

- Compaction

- 查询时

PREAGGREGATION 是On 表⽰存储层可以直接返回数据, 存储层⽆需进⾏聚合

PREAGGREGATION 是 OFF 表⽰存储层必须聚合, 可以关注下 OFF的原因是否符合预期

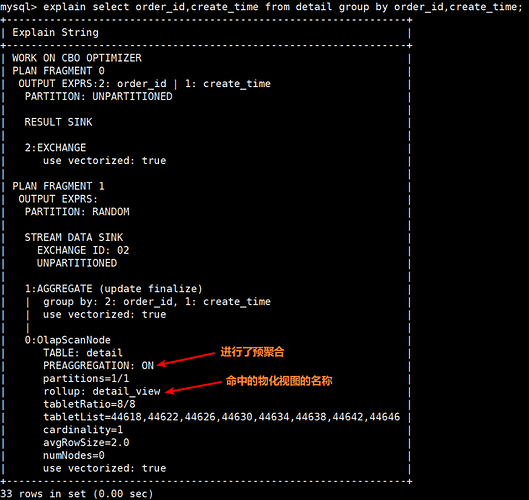

是否命中物化视图

通过执行计划可以看到命中的物化视图的名称以及是否进行了预聚合

SCAN环节分析及优化

再porifle中搜索OLAP_SCAN_NODE,会有很多个结果形如OLAP_SCAN_NODE (id=0),其中id=x有多个,表示同一个表的scan信息。如下是一个典型的scan慢节点。

OLAP_SCAN_NODE (id=0):(Active: 56s208ms[56208256470ns], % non-child: 0.00%)

- Table: xxxx

- Rollup: xxxx

- Predicates: 3: svrIp = 'xxx.xxx.xxx.xxx'

- BytesRead: 1.21 GB

- NumDiskAccess: 0

- PeakMemoryUsage: 1.24 MB

- PerReadThreadRawHdfsThroughput: 0.0 /sec

- RowsRead: 0

- RowsReturned: 96

- RowsReturnedRate: 1

- ScanTime: 56s206ms

- ScannerThreadsInvoluntaryContextSwitches: 0

- ScannerThreadsTotalWallClockTime: 0ns

- MaterializeTupleTime(*): 0ns

- ScannerThreadsSysTime: 0ns

- ScannerThreadsUserTime: 0ns

- ScannerThreadsVoluntaryContextSwitches: 0

- TabletCount : 1

- TotalRawReadTime(*): 0ns

- TotalReadThroughput: 0.0 /sec

MERGE:

- aggr: 56s206ms

- union: 56s203ms

SCAN:(Active: 56s203ms[56203074918ns], % non-child: 45.19%)

- CachedPagesNum: 0

- CompressedBytesRead: 2.05 GB

- CreateSegmentIter: 435.687us

- DictDecode: 3.149ms

- IOTime: 48s384ms

- LateMaterialize: 42s792ms

- PushdownPredicates: 1

- RawRowsRead: 459.082236M (459082236)

- SegmentInit: 661.218ms

- BitmapIndexFilter: 0ns

- BitmapIndexFilterRows: 0

- BloomFilterFilterRows: 0

- ShortKeyFilterRows: 0

- ZoneMapIndexFilterRows: 19.611648M (19611648)

- SegmentRead: 12s743ms

- BlockFetch: 11s190ms

- BlockFetchCount: 694.015K (694015)

- BlockSeek: 829.477ms

- BlockSeekCount: 1.058K (1058)

- ChunkCopy: 124.666ms

- DecompressT: 40.773ms

- DelVecFilterRows: 0

- IndexLoad: 0ns

- PredFilter: 161.341ms

- PredFilterRows: 458.902727M (458902727)

- TotalPagesNum: 146.41K (146410)

- UncompressedBytesRead: 2.08 GB

其中table=x可以看到对应的所扫描的表信息。

数据倾斜问题

查询某张表的scan信息,比如上述test表对应的OLAP_SCAN_NODE (id=0),分别检索查看多个Active: xxxms信息,观察是否差距很大,如果存在个别节点耗时是其他节点数据量倍数,

例如:

OLAP_SCAN_NODE (id=0):(Active: 4m50s[290851091923ns]

OLAP_SCAN_NODE (id=0):(Active: 250.644ms[250644279ns]

OLAP_SCAN_NODE (id=0):(Active: 131.874ms[131874236ns]

OLAP_SCAN_NODE (id=0):(Active: 160.832ms[160832221ns]

…

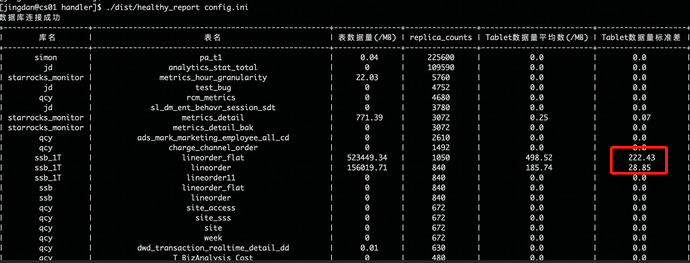

则有数据倾斜的问题。可以运行一下tabelt分析工具。

https://starrocks-public.oss-cn-zhangjiakou.aliyuncs.com/tools.tar.gz

下载完成后编辑config.ini信息,然后执行

./healthy_report config.ini

暂时无法在文档外展示此内容

可以获取以下信息:

以上信息中关注下标准差那列,如果异常高,则表示该表需要重新选取hash键,建表不合理有严重的数据倾斜问题。

除此还可以关注下tablet数据平均值是否合理,首先预估每个分区的数据量,然后按照每 1GB -10 GB 原始数据一个 tablet 计算,从而确定分桶数量。具体可参考 确定分桶数量

关键指标解读

以下对scan的关键指标做一些解读,如果对应字段的值占比总查询时间很高,可以针对该阶段进行分析。

- BytesRead

读取tablet数据量大小,该值太大或者太小表示tablet设置的均不合理,可以参考视频内容,简单说明了分桶数的作用以及使用注意事项:

https://www.bilibili.com/video/BV1SX4y1c7i4?p=2

- RowsReturned

扫描返回符合要求的行数,如果BytesRead很大,而RowsReturned很小。但是scan占比挺久,可以考虑将过滤条件中的字段建表时设置为key列,对点查效果有很好的加速效果,关于排序列的使用可以参考以下内容:

https://www.bilibili.com/video/BV1SX4y1c7i4?p=1

- RowsReturnedRate

结果集返回速率,如果有个别节点返回比较慢,可以查看磁盘读写是否异常,或者cpu,内存资源是否负载很高,导致系统调度时间增加,该问题常见% non-child: 98.1%占比很大。

- TabletCount

tablet数量,关注此处是否太多或者太少,此处和bucket设置息息相关,一般按照数据量去划分bucket数量,同BytesRead综合分析是否需要修改bucket数量

- MERGE

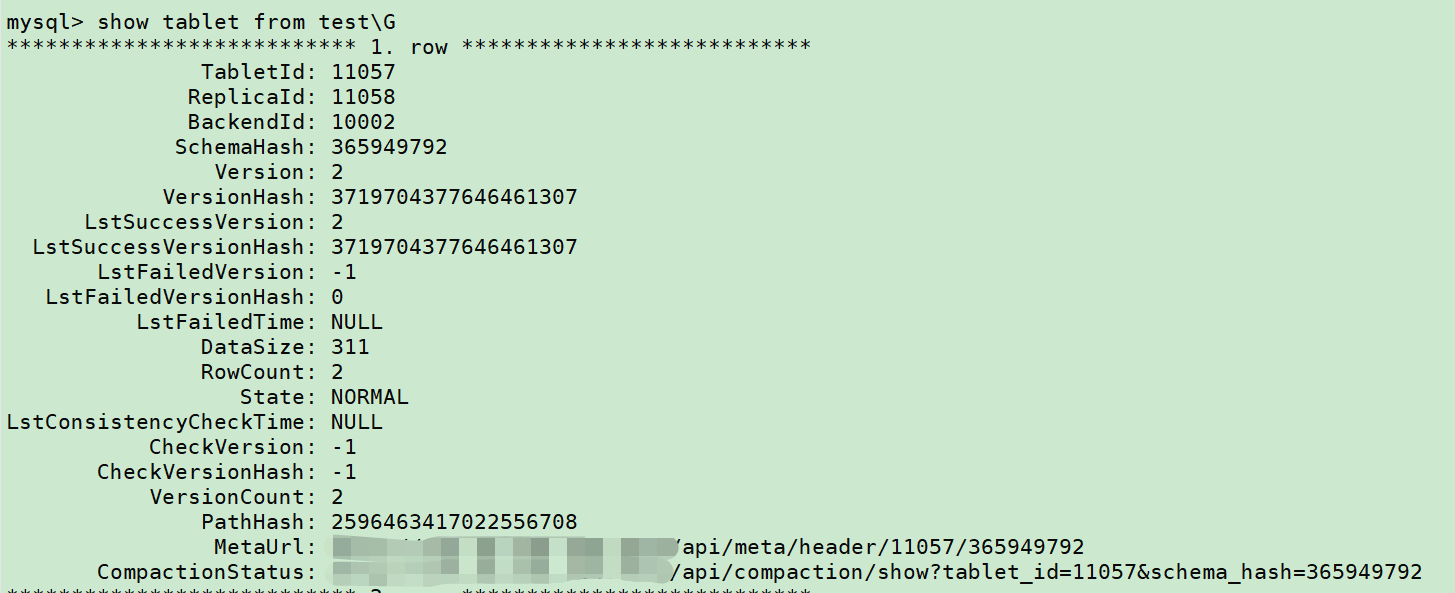

如果merge中的aggr/union/sort耗时特别久,则整体瓶颈在底层rowset的merge上,该问题常见于unique表和aggregate表,在于内部存在多个rowset没有合并,导致查询时内部需要做相应操作,从而影响结果集输出。可以通过以下步骤去排查rowset是否太多:

Show tablet from table\G

找到version比较大的tablet,此处举例取11057,接着

Show tablet 11057\G

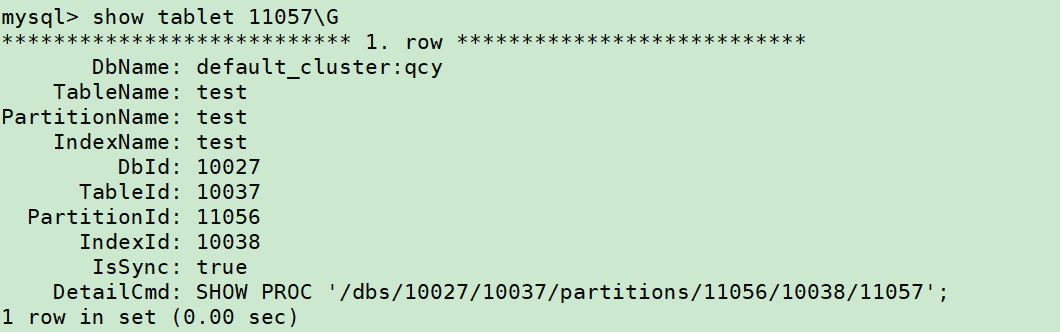

可以用 「DetailCmd」的命令,来进一步展示详细信息:

SHOW PROC '/dbs/10027/10037/partitions/11056/10038/11057'\G

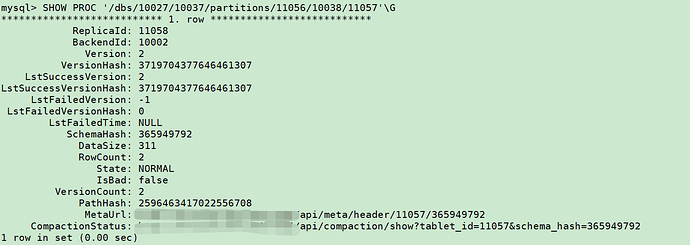

访问「CompactionStatus」中的 URL,会展示分片的几个副本(replica)的具体信息,包括可以查看每个副本更多元数据信息。

会出现如下信息:

{

"cumulative point": 40716,

"last cumulative failure time": "2021-05-28 14:28:07.695",

"last base failure time": "1970-01-01 08:00:00.000",

"last cumulative success time": "2021-05-27 18:30:59.385",

"last base success time": "2021-05-27 18:30:59.385",

"rowsets": [

"[0-40603] 1 DATA NONOVERLAPPING",

"[40604-40635] 0 DATA NONOVERLAPPING",

"[40636-40674] 1 DATA NONOVERLAPPING",

"[40675-40715] 0 DATA NONOVERLAPPING"

],

"stale version path": []

}

此处有4个rowset说明数据还没充分做compaction。(其中 NONOVERLAPPING 只是表示一个 rowset 内部,如果有多个 segment 时,是否有重叠部分)。

可以通过修改 BE 的 conf/be.conf 中的配置,加快 compaction,以减少版本数:

注:当磁盘配置为10+块时候,建议降低导入频率,不要采取以下措施,可能会导致compaction占用cpu比较多

# 加快做 cumulative compaction 的检查,能减少版本数(rowset 的数量)

cumulative_compaction_check_interval_seconds = 2

cumulative_compaction_num_threads_per_disk = 2

base_compaction_num_threads_per_disk = 2

如果想要进一步合并 2 个 rowset 为 1 个 rowset,可以做如下配置,但一般不建议,因为会导致增加大量 base compaction。

# Cumulative文件数目要达到设定数值,就进行 base compaction

base_compaction_num_cumulative_deltas = 1

注:聚合和更新表key列过多时,会极大影响merge时间,可以根据业务合适选取key列,如果必要的key列实在很多,可以考虑以下几种方案:

- 聚合表可以考虑用明细表+物化视图替代

- 更新表如果符合主键模型的场景,可以使用主键模型替代,详情参考文档主键模型使用部分内容:https://docs.starrocks.com/zh-cn/main/table_design/Data_model#主键模型

- IOTime

磁盘io所用时间,和上述MERGE和RowsReturnedRate有关,排查见如上所示。

- PushdownPredicates

下推到存储层的谓词

- RawRowsRead

读取行数

- BitmapIndexFilter/BitmapIndexFilterRows/BloomFilterFilterRows

被索引过滤的行数

- PredFilter

谓词过滤的时间

- PredFilterRows

谓词过滤的行数

注:如果有谓词下推异常或者分区分桶裁剪不正常的情况可以检查是否包含以下问题:

- 字段类型不一致

- 过滤条件中左边字段有函数 eg:

date_format('2009-10-04 22:23:00', '%W %M %Y')

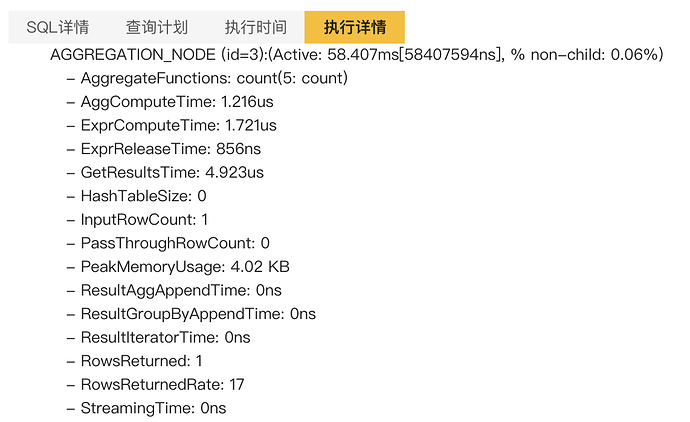

Aggregate环节分析及优化

关键指标解读

- AggComputeTime

构建 Hash 表和计算 聚合函数的时间

- ExprComputeTime: 1.721us

计算聚合函数内部标量函数的时间

- ExprReleaseTime: 856ns

内存释放的时间

- GetResultsTime: 4.923us

将Hash表的数据转换成Chunk的时间

- HashTableSize: 0

hash表大小

- InputRowCount: 1

聚合前行数

- PassThroughRowCount: 0

Streaming 聚合时,没有经过 Hash 表,直接输出的行数

- PeakMemoryUsage: 4.02 KB

内存使用

- StreamingTime: 0ns

Streaming 聚合时,聚合函数列格式转换的耗时

JOIN环节分析及优化

名词解释

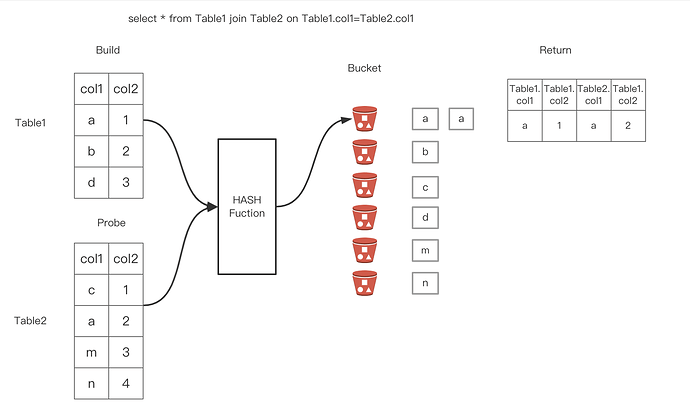

关于HashJoin :两个阶段,Build+Probe

Build阶段 :将其中一个表(一般是较小的那个)中的每一个条经过 Hash 函数的计算都放到不同的Hash Bucket中。

Probe阶段 :对于另外一个表,经过Hash函数,确定其所在的Hash Bucket,然后和上一步构建的Hash Bucket中的每一行进行匹配,如果匹配到就返回对应的行。

如下图所示两个表经过Hash join之后应该返回的分别是第1和第2行。

Join 左右表调整 : StarRocks 是用右表构建 Hash 表,所以右表应该是小表,StarRocks 可以基于 cost 自动调整左右表顺序,也会自动把 left join 转 right join。

Join 多表 Reorder :多表Join 如何选择出正确的Join 顺序,是 CBO 优化器的核心,当 Join 表的数量小于等于5时,StarRocks 会基于 Join 交换律和结合律进行 Join Reorder,大于5时,StarRocks 会基于贪心算法和动态规划进行 Join Reorder。

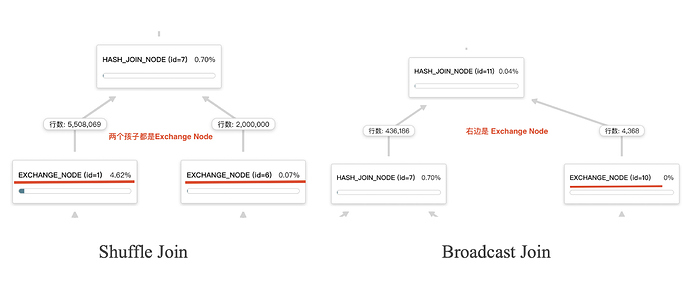

Join分布式执行选择 :

- BroadCast Join:将右表全量发送到左表的HashJoinNode

- Shuffle Join:将左右表的数据根据哈希计算分散到集群的节点之中

- Colocate Join:两个表的数据分布都是一样的,只需要本地join即可,没有网络传输开销。

- Bucket Shuffle Join:join的列是左表的数据分布列(分桶键),所以相比于shuffle join只需要将右表的数据发送到左表数据存储计算节点。

- Replicated Join:右表的全量数据是分布在每个节点上的(也就是副本个数和BE节点数量一致),不管左表怎么分布,都是走本地Join。没有网络传输开销。

RunetimeFilter: 基本原理是通过在join操作之前提前过滤掉那些不会命中join的输入数据来大幅减少join中的数据传输和计算,从而减少整体的执行时间。

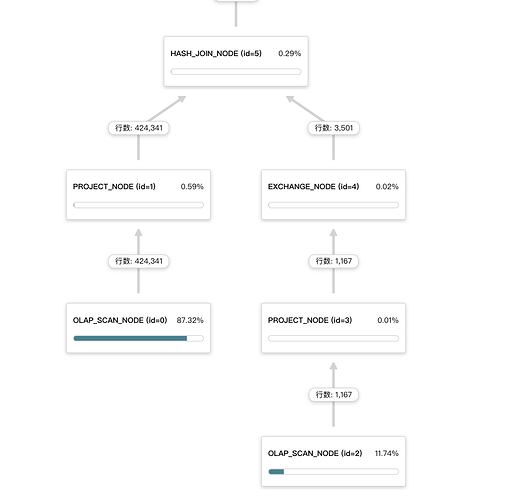

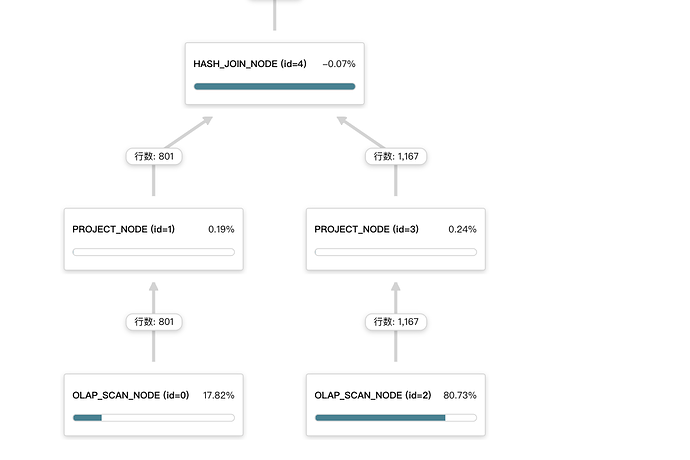

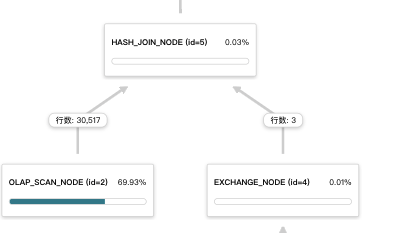

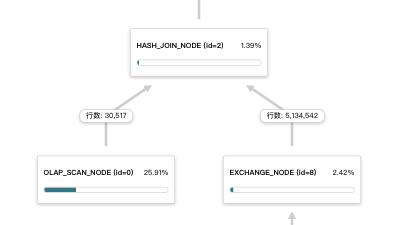

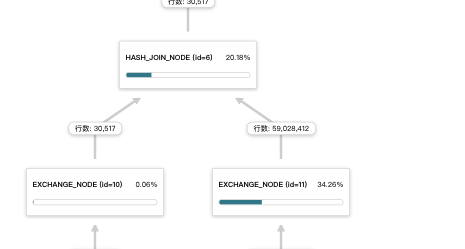

注:下图是企业版profile可视化界面

Join节点的Profile

HASH_JOIN_NODE (id=4):(Active: 26s215ms[26215554772ns], % non-child: 0.13%)

- JoinPredicates: `lo_custkey` = `c_custkey`

- JoinType: InnerJoin

- AvgInputProbeChunkSize: 3.622K (3622)

- AvgOutputChunkSize: 981

- BuildBuckets: 1.048576M (1048576)

- BuildRows: 599.689K (599689)

- BuildTime: 28.98ms

- 1-CopyRightTableChunkTime: 15.276ms

- 2-BuildHashTableTime: 8.933ms

- 3-BuildPushDownExprTime: 3.322ms

- 4-BuildConjunctEvaluateTime: 1.456us

- PeakMemoryUsage: 14.87 MB

- ProbeRows: 50.708K (50708)

- ProbeTime: 6.212ms

- 1-MergeInputChunkTimer: 1.12us

- 2-SearchHashTableTimer: 3.898ms

- 3-OutputBuildColumnTimer: 1.924ms

- 4-OutputProbeColumnTimer: 311.679us

- 5-OutputTupleColumnTimer: 5.539us

- 6-ProbeConjunctEvaluateTime: 14.437us

- 7-OtherJoinConjunctEvaluateTime: 0ns

- 8-WhereConjunctEvaluateTime: 0ns

- PushDownExprNum: 1

- RowsReturned: 12.764K (12764)

- RowsReturnedRate: 486

当满足RuntimeFilter的条件时,会触发Runtime Filter下推到左表,达到提前过滤。可以关注下左表 OLAP_SCAN_NODE 节点是否有“JoinRuntimeFilter”关键字

OLAP_SCAN_NODE (id=0):(Active: 1s71ms[1071417120ns], % non-child: 0.00%)

- Table: table_1

- Rollup: table_1

- BytesRead: 1.13 GB

- JoinRuntimeFilterEvaluate: 1

- JoinRuntimeFilterInputRows: 11.536K (11536)

- JoinRuntimeFilterOutputRows: 11.536K (11536)

- JoinRuntimeFilterTime: 41.126us

- NumDiskAccess: 0

- PeakMemoryUsage: 3.57 MB

- PerReadThreadRawHdfsThroughput: 0.0 /sec

- RowsRead: 0

- RowsReturned: 11.536K (11536)

- RowsReturnedRate: 10.767K /sec

- ScanTime: 2s988ms

- ScannerThreadsInvoluntaryContextSwitches: 0

- ScannerThreadsTotalWallClockTime: 0ns

- MaterializeTupleTime(*): 0ns

- ScannerThreadsSysTime: 0ns

- ScannerThreadsUserTime: 0ns

- ScannerThreadsVoluntaryContextSwitches: 0

- TabletCount : 3

- TotalRawReadTime(*): 0ns

- TotalReadThroughput: 0.0 /sec

执行计划:explain + sql

HASH JOIN |

join op: INNER JOIN (BROADCAST) |

hash predicates: |

colocate: false, reason: |

equal join conjunct: 3: lo_custkey = 35: c_custkey |

use vectorized: true

分析和优化

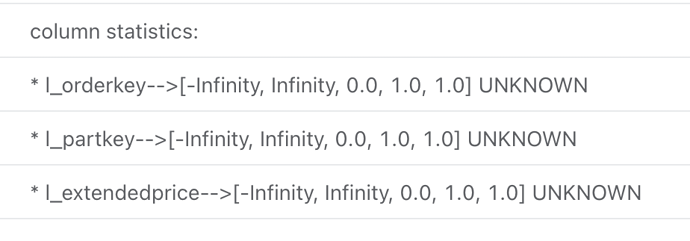

是否有收集统计信息?

1.19.0及其之后的版本默认开启了cbo,之前的版本如果没有开启cbo,可能就会没有统计信息

如果下面的sql有查询结果,表示有统计信息收集(table_name为参与join的表名)。如果没有查询结果,可参考analyze命令手动触发统计信息收集

select * from _statistics_.table_statistic_v1 where table_name like '%table_name';

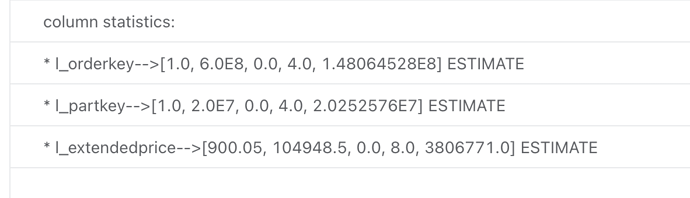

explain costs + sql

不合理:各个列的数据是默认值0.0,1.0等

合理:有统计信息输出

怎么判断瓶颈点?

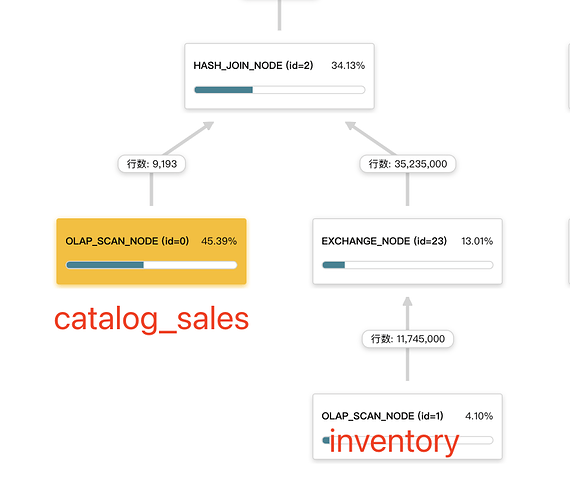

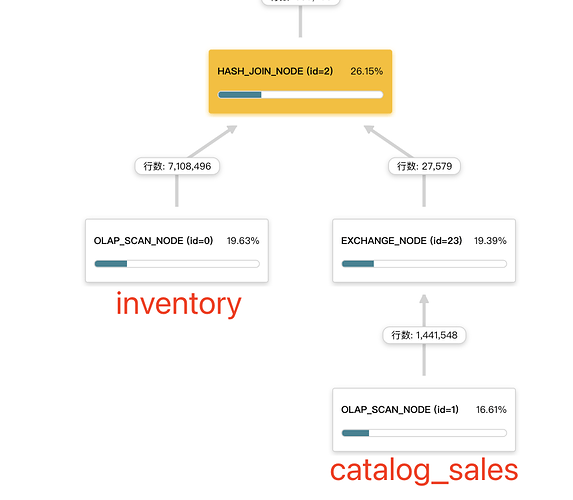

下图表示的是合理的方式,右表是小表,采用的broadcast join方式。

下图表示的是不合理的方式,右表是大表,采用的broadcast join方式,会将右表的数据拷贝 BE 数量* parallel_fragment_exec_instance_num (并行度)份,导致 JOIN 节点的右子节点的 EXCHANGE 节点花费很多的执行时间。

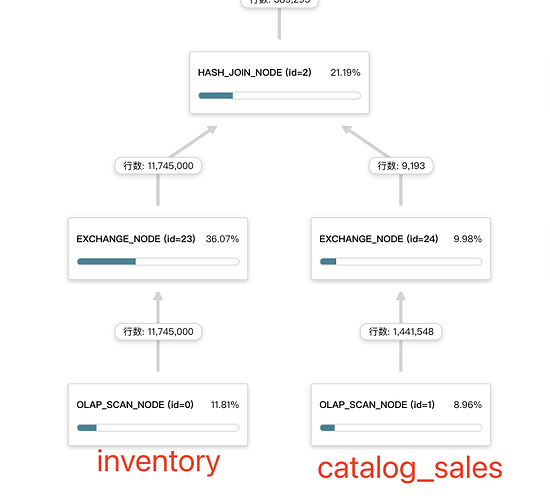

下图表示的是不合理的方式,两个表数据量相差比较大,现在采用的是shuffle join(两个孩子节点都是EXCHANGE NODE),这种情况下建议可以尝试采用broadcast join,调整下左右表顺序,小表在左边。例如加hint方式:

select 右表.x,左表.y from 右表 join [broadcast] 左表 on 左表.x1 = 右表.x1

常见优化方法

当前启用了CBO优化器,一般情况下不需要人为触发优化,不过在一些场景下可以采用下面的方法尝试优化下:

- join condition 的列,更应该使用 int、DATE 等简单类型

- 在join之前尽量添加一些where条件,能够充分发挥谓词下推,减少后续的数据shuffle和join节点处理的数据量

- 大表join,能够使用colocate join的尽量使用,能够减少网络传输,极大的提升性能,具体请参考Colocate Join

- 大小表join,左右表顺序有问题,可以通过[broadcast] hint方式调整小表为右表方式。例如:select a.x,b.y from a join [broadcast] b on a.x1 = b.x1

- 两个相差不多的表(一般几百k行)join,有些情况下默认会选用broadcast join,这个时候可以尝试采用[shuffle] hint的方式强制走shuffle join。例如:select a.x,b.y from a join [shuffle] b on a.x1 = b.x1

案例

调整左右表

下面以tpcds 1GB数据集构建的sql来分享下通过调整左右表顺序来达到提升查询效率的目的

原sql:1秒831毫秒

select i_item_desc

,w_warehouse_name

,d1.d_week_seq

,sum(case when p_promo_sk is null then 1 else 0 end) no_promo

,sum(case when p_promo_sk is not null then 1 else 0 end) promo

,count(*) total_cntfrom catalog_salesjoin inventory on (cs_item_sk = inv_item_sk)join warehouse on (w_warehouse_sk=inv_warehouse_sk)join item on (i_item_sk = cs_item_sk)join customer_demographics on (cs_bill_cdemo_sk = cd_demo_sk)join household_demographics on (cs_bill_hdemo_sk = hd_demo_sk)join date_dim d1 on (cs_sold_date_sk = d1.d_date_sk)join date_dim d2 on (inv_date_sk = d2.d_date_sk)join date_dim d3 on (cs_ship_date_sk = d3.d_date_sk)left outer join promotion on (cs_promo_sk=p_promo_sk)left outer join catalog_returns on (cr_item_sk = cs_item_sk and cr_order_number = cs_order_number)where d1.d_week_seq = d2.d_week_seq

and inv_quantity_on_hand < cs_quantity

and d3.d_date > d1.d_date + 5

and hd_buy_potential = '>10000'

and d1.d_year = 1999

and cd_marital_status = 'D'

group by i_item_desc,w_warehouse_name,d1.d_week_seqorder by total_cnt desc, i_item_desc, w_warehouse_name, d_week_seqlimit 100

通过分析profile看到主要耗时在catalog_sales和inventory表的join耗时,可以明显看出catalog_sales是小表,应该在右边,但是现在在左边,导致inventory表被broadcast了。

我们调整catalog_sales表被broadcast的效果,耗时降到了 383ms

select i_item_desc

,w_warehouse_name

,d1.d_week_seq

,sum(case when p_promo_sk is null then 1 else 0 end) no_promo

,sum(case when p_promo_sk is not null then 1 else 0 end) promo

,count(*) total_cnt

from inventory

join [broadcast] catalog_sales on (cs_item_sk = inv_item_sk)

join warehouse on (w_warehouse_sk=inv_warehouse_sk)

join item on (i_item_sk = cs_item_sk)

join customer_demographics on (cs_bill_cdemo_sk = cd_demo_sk)

join household_demographics on (cs_bill_hdemo_sk = hd_demo_sk)

join date_dim d1 on (cs_sold_date_sk = d1.d_date_sk)

join date_dim d2 on (inv_date_sk = d2.d_date_sk)

join date_dim d3 on (cs_ship_date_sk = d3.d_date_sk)

left outer join promotion on (cs_promo_sk=p_promo_sk)

left outer join catalog_returns on (cr_item_sk = cs_item_sk and cr_order_number = cs_order_number)

where d1.d_week_seq = d2.d_week_seq

and inv_quantity_on_hand < cs_quantity

and d3.d_date > d1.d_date + 5

and hd_buy_potential = '>10000'

and d1.d_year = 1999

and cd_marital_status = 'D'

group by i_item_desc,w_warehouse_name,d1.d_week_seq

order by total_cnt desc, i_item_desc, w_warehouse_name, d_week_seq

limit 100

调整Join方式:broadcast?shuffle?

还是上面的例子,我们通过调整broadcast join 为shuffle join,时间降到了 408ms

select i_item_desc

,w_warehouse_name

,d1.d_week_seq

,sum(case when p_promo_sk is null then 1 else 0 end) no_promo

,sum(case when p_promo_sk is not null then 1 else 0 end) promo

,count(*) total_cnt

from inventory

join [shuffle] catalog_sales on (cs_item_sk = inv_item_sk)

join warehouse on (w_warehouse_sk=inv_warehouse_sk)

join item on (i_item_sk = cs_item_sk)

join customer_demographics on (cs_bill_cdemo_sk = cd_demo_sk)

join household_demographics on (cs_bill_hdemo_sk = hd_demo_sk)

join date_dim d1 on (cs_sold_date_sk = d1.d_date_sk)

join date_dim d2 on (inv_date_sk = d2.d_date_sk)

join date_dim d3 on (cs_ship_date_sk = d3.d_date_sk)

left outer join promotion on (cs_promo_sk=p_promo_sk)

left outer join catalog_returns on (cr_item_sk = cs_item_sk and cr_order_number = cs_order_number)

where d1.d_week_seq = d2.d_week_seq

and inv_quantity_on_hand < cs_quantity

and d3.d_date > d1.d_date + 5

and hd_buy_potential = '>10000'

and d1.d_year = 1999

and cd_marital_status = 'D'

group by i_item_desc,w_warehouse_name,d1.d_week_seq

order by total_cnt desc, i_item_desc, w_warehouse_name, d_week_seq

limit 100

Colocate join减少网络传输

下面以tpch 50GB数据集构建的sql来分享下通过调整左右表顺序来达到提升查询效率的目的

默认查询执行方式选择的broadcast join,耗时440ms

原建表

CREATE TABLE `lineitem` (

`l_shipdate` date NULL COMMENT "",

`l_orderkey` int(11) NULL COMMENT "",

`l_linenumber` int(11) NULL COMMENT "",

`l_partkey` int(11) NULL COMMENT "",

`l_suppkey` int(11) NULL COMMENT "",

`l_quantity` decimal64(15, 2) NULL COMMENT "",

`l_extendedprice` decimal64(15, 2) NULL COMMENT "",

`l_discount` decimal64(15, 2) NULL COMMENT "",

`l_tax` decimal64(15, 2) NULL COMMENT "",

`l_returnflag` varchar(1) NULL COMMENT "",

`l_linestatus` varchar(1) NULL COMMENT "",

`l_commitdate` date NULL COMMENT "",

`l_receiptdate` date NULL COMMENT "",

`l_shipinstruct` varchar(25) NULL COMMENT "",

`l_shipmode` varchar(10) NULL COMMENT "",

`l_comment` varchar(44) NULL COMMENT ""

) ENGINE=OLAP

DUPLICATE KEY(`l_shipdate`, `l_orderkey`)

COMMENT "OLAP"

DISTRIBUTED BY HASH(`l_orderkey`) BUCKETS 96

PROPERTIES (

"replication_num" = "3",

"in_memory" = "false",

"storage_format" = "DEFAULT"

);

CREATE TABLE `orders` (

`o_orderkey` int(11) NULL COMMENT "",

`o_orderdate` date NULL COMMENT "",

`o_custkey` int(11) NULL COMMENT "",

`o_orderstatus` varchar(1) NULL COMMENT "",

`o_totalprice` decimal64(15, 2) NULL COMMENT "",

`o_orderpriority` varchar(15) NULL COMMENT "",

`o_clerk` varchar(15) NULL COMMENT "",

`o_shippriority` int(11) NULL COMMENT "",

`o_comment` varchar(79) NULL COMMENT ""

) ENGINE=OLAP

DUPLICATE KEY(`o_orderkey`, `o_orderdate`)

COMMENT "OLAP"

DISTRIBUTED BY HASH(`o_orderkey`) BUCKETS 96

PROPERTIES (

"replication_num" = "3",

"in_memory" = "false",

"storage_format" = "DEFAULT"

);

select o_orderpriority, count(*) as order_count

from lineitem

join orders on l_orderkey = o_orderkey

where

o_orderdate >= date '1993-07-01'

and o_orderdate < date '1993-07-01' + interval '3' month

and l_commitdate < l_receiptdate

group by o_orderpriority

order by o_orderpriority;

lineitem和orders表建立colocate属性,没有网络shuffle耗时,sql耗时降到了60ms

优化后建表

CREATE TABLE `lineitem_colocate` (

`l_shipdate` date NULL COMMENT "",

`l_orderkey` int(11) NULL COMMENT "",

`l_linenumber` int(11) NULL COMMENT "",

`l_partkey` int(11) NULL COMMENT "",

`l_suppkey` int(11) NULL COMMENT "",

`l_quantity` decimal64(15, 2) NULL COMMENT "",

`l_extendedprice` decimal64(15, 2) NULL COMMENT "",

`l_discount` decimal64(15, 2) NULL COMMENT "",

`l_tax` decimal64(15, 2) NULL COMMENT "",

`l_returnflag` varchar(1) NULL COMMENT "",

`l_linestatus` varchar(1) NULL COMMENT "",

`l_commitdate` date NULL COMMENT "",

`l_receiptdate` date NULL COMMENT "",

`l_shipinstruct` varchar(25) NULL COMMENT "",

`l_shipmode` varchar(10) NULL COMMENT "",

`l_comment` varchar(44) NULL COMMENT ""

) ENGINE=OLAP

DUPLICATE KEY(`l_shipdate`, `l_orderkey`)

COMMENT "OLAP"

DISTRIBUTED BY HASH(`l_orderkey`) BUCKETS 96

PROPERTIES (

"replication_num" = "3",

"in_memory" = "false",

"colocate_with" = "group_tpch_50",

"storage_format" = "DEFAULT"

);

CREATE TABLE `orders_colocate` (

`o_orderkey` int(11) NULL COMMENT "",

`o_orderdate` date NULL COMMENT "",

`o_custkey` int(11) NULL COMMENT "",

`o_orderstatus` varchar(1) NULL COMMENT "",

`o_totalprice` decimal64(15, 2) NULL COMMENT "",

`o_orderpriority` varchar(15) NULL COMMENT "",

`o_clerk` varchar(15) NULL COMMENT "",

`o_shippriority` int(11) NULL COMMENT "",

`o_comment` varchar(79) NULL COMMENT ""

) ENGINE=OLAP

DUPLICATE KEY(`o_orderkey`, `o_orderdate`)

COMMENT "OLAP"

DISTRIBUTED BY HASH(`o_orderkey`) BUCKETS 96

PROPERTIES (

"replication_num" = "3",

"in_memory" = "false",

"colocate_with" = "group_tpch_50",

"storage_format" = "DEFAULT"

);

select o_orderpriority, count(*) as order_count

from lineitem_colocate

join orders_colocate on l_orderkey = o_orderkey

where

o_orderdate >= date '1993-07-01'

and o_orderdate < date '1993-07-01' + interval '3' month

and l_commitdate < l_receiptdate

group by o_orderpriority

order by o_orderpriority;

物化视图分析及优化

简介

物化视图采用空间换时间的设计思路,一张表可以创建多个物化视图,查询会自动命中最优的物化视图。物化视图不能通过名称直接查询,但是其在底层存储时与一般表无异,创建物化视图后基表中的数据会以异步的方式同步到其所有物化视图中。

目前物化视图支持的两种场景,当然也支持两种方式混合:

预聚合:

对明细表的任意维度组合进行预先聚合;

维度列变序:

采用新的维度列的排序方式,以便命中前缀查询条件。

注意:

- 创建了大量的物化视图,会导致数据导入速度过慢,并且部分物化视图的相互重复,查询频率极低,会有较高的查询延迟。

- 只有 明细模型 和 聚合模型支持创建物化视图 ,主键模型和更新模型 不支持 创建物化视图

案例

以下sql以SSB 1T测试数据集(lineorder_flat 474G)测试sql为例子创建物化视图优化。

以sum函数聚合为例

-- 原始sql

select LO_ORDERDATE,sum(LO_QUANTITY) from lineorder_flat group by LO_ORDERDATE;

--创建物化视图

CREATE MATERIALIZED VIEW sum_mv as select LO_ORDERDATE,sum(LO_QUANTITY) from lineorder_flat group by LO_ORDERDATE;

原始sql的执行时间为:27.61 sec

Profile

OLAP_SCAN_NODE (id=0):(Active: 12s132ms[12132328192ns], % non-child: 0.00%)

- Table: lineorder_flat

- Rollup: lineorder_flat

- BytesRead: 6.79 GB

- NumDiskAccess: 0

- PeakMemoryUsage: 0.00

- RowsRead: 1.457462453B (1457462453)

- RowsReturned: 1.457462453B (1457462453)

- RowsReturnedRate: 120.130483M /sec

- ScanTime: 13m9s

- ScannerThreadsInvoluntaryContextSwitches: 0

- ScannerThreadsTotalWallClockTime: 0ns

- MaterializeTupleTime(*): 0ns

- ScannerThreadsSysTime: 0ns

- ScannerThreadsUserTime: 0ns

- ScannerThreadsVoluntaryContextSwitches: 0

- TabletCount : 254

- TotalRawReadTime(*): 0ns

- TotalReadThroughput: 289.9573450088501 MB/sec

SCAN:(Active: 13m9s[789079370240ns], % non-child: 100.00%)

- CachedPagesNum: 547

- CompressedBytesRead: 1.04 GB

- CreateSegmentIter: 3.866ms

- IOTime: 13m2s

- PushdownPredicates: 0

- RawRowsRead: 1.457462453B (1457462453)

- ReadPagesNum: 111.772K (111772)

- SegmentInit: 14.478ms

- BitmapIndexFilter: 0ns

- BitmapIndexFilterRows: 0

- BloomFilterFilterRows: 0

- SegmentZoneMapFilterRows: 0

- ShortKeyFilterRows: 0

- ZoneMapIndexFilterRows: 0

- SegmentRead: 13m8s

- BlockFetch: 872.979ms

- BlockFetchCount: 355.948K (355948)

- BlockSeek: 13m7s

- BlockSeekCount: 355.948K (355948)

- ChunkCopy: 0ns

- DecompressT: 135.665ms

- DelVecFilterRows: 0

- IndexLoad: 0ns

- PredFilter: 0ns

- PredFilterRows: 0

- RowsetsReadCount: 508

- SegmentsReadCount: 728

- TotalColumnsDataPageCount: 111.772K (111772)

- UncompressedBytesRead: 1.09 GB

创建物化视图后执行时间为:0.96 sec

Profile

OLAP_SCAN_NODE (id=0):(Active: 525.174ms[525174514ns], % non-child: 95.03%)

- Table: lineorder_flat

- Rollup: sum_mv

- BytesRead: 488.32 KB

- NumDiskAccess: 0

- PeakMemoryUsage: 0.00

- RowsRead: 100.007K (100007)

- RowsReturned: 100.007K (100007)

- RowsReturnedRate: 190.426K /sec

- ScanTime: 15.646ms

- ScannerThreadsInvoluntaryContextSwitches: 0

- ScannerThreadsTotalWallClockTime: 0ns

- MaterializeTupleTime(*): 0ns

- ScannerThreadsSysTime: 0ns

- ScannerThreadsUserTime: 0ns

- ScannerThreadsVoluntaryContextSwitches: 0

- TabletCount : 294

- TotalRawReadTime(*): 0ns

- TotalReadThroughput: 349.794921875 KB/sec

SCAN:(Active: 14.862ms[14862553ns], % non-child: 2.77%)

- CachedPagesNum: 0

- CompressedBytesRead: 174.46 KB

- CreateSegmentIter: 25s53ms

- IOTime: 1.121ms

- PushdownPredicates: 0

- RawRowsRead: 100.007K (100007)

- ReadPagesNum: 588

- SegmentInit: 7.433ms

- BitmapIndexFilter: 0ns

- BitmapIndexFilterRows: 0

- BloomFilterFilterRows: 0

- SegmentZoneMapFilterRows: 0

- ShortKeyFilterRows: 0

- ZoneMapIndexFilterRows: 0

- SegmentRead: 7.124ms

- BlockFetch: 490.521us

- BlockFetchCount: 294

- BlockSeek: 6.225ms

- BlockSeekCount: 294

- ChunkCopy: 0ns

- DecompressT: 0ns

- DelVecFilterRows: 0

- IndexLoad: 0ns

- PredFilter: 0ns

- PredFilterRows: 0

- RowsetsReadCount: 588

- SegmentsReadCount: 294

- TotalColumnsDataPageCount: 588

- UncompressedBytesRead: 158.96 KB

通过以上profile可以看出,物化视图创建成功后进行查询时,olapscan节点:

-

- UncompressedBytesRead扫描数据量参数 变少,从GB级别达到KB级别,解压缩数据使用的时间更少。 -

-Table: lineorder_flat 扫描的表是同一张表 - 创建物化视图后

Rollup: sum_mv 为创建好的视图,代表查询命中视图,如果仍为源表名代表未命中物化视图 - -BytesRead BytesRead 读取的数据量变少

通过创建物化视图可以减少数据扫描量实现对查询的加速。

注意:物化视图创建过程为异步过程,数据量越大耗时越久,通过命令可以查看创建进度: SHOW ALTER MATERIALIZED VIEW FROM databaseName;

附件

lineorder_flat 建表语句

CREATE TABLE `lineorder_flat` (

`LO_ORDERDATE` date NOT NULL COMMENT "",

`LO_ORDERKEY` bigint(20) NOT NULL COMMENT "",

`LO_LINENUMBER` tinyint(4) NOT NULL COMMENT "",

`LO_CUSTKEY` int(11) NOT NULL COMMENT "",

`LO_PARTKEY` int(11) NOT NULL COMMENT "",

`LO_SUPPKEY` int(11) NOT NULL COMMENT "",

`LO_ORDERPRIORITY` varchar(100) NOT NULL COMMENT "",

`LO_SHIPPRIORITY` tinyint(4) NOT NULL COMMENT "",

`LO_QUANTITY` tinyint(4) NOT NULL COMMENT "",

`LO_EXTENDEDPRICE` int(11) NOT NULL COMMENT "",

`LO_ORDTOTALPRICE` int(11) NOT NULL COMMENT "",

`LO_DISCOUNT` tinyint(4) NOT NULL COMMENT "",

`LO_REVENUE` int(11) NOT NULL COMMENT "",

`LO_SUPPLYCOST` int(11) NOT NULL COMMENT "",

`LO_TAX` tinyint(4) NOT NULL COMMENT "",

`LO_COMMITDATE` date NOT NULL COMMENT "",

`LO_SHIPMODE` varchar(100) NOT NULL COMMENT "",

`C_NAME` varchar(100) NOT NULL COMMENT "",

`C_ADDRESS` varchar(100) NOT NULL COMMENT "",

`C_CITY` varchar(100) NOT NULL COMMENT "",

`C_NATION` varchar(100) NOT NULL COMMENT "",

`C_REGION` varchar(100) NOT NULL COMMENT "",

`C_PHONE` varchar(100) NOT NULL COMMENT "",

`C_MKTSEGMENT` varchar(100) NOT NULL COMMENT "",

`S_NAME` varchar(100) NOT NULL COMMENT "",

`S_ADDRESS` varchar(100) NOT NULL COMMENT "",

`S_CITY` varchar(100) NOT NULL COMMENT "",

`S_NATION` varchar(100) NOT NULL COMMENT "",

`S_REGION` varchar(100) NOT NULL COMMENT "",

`S_PHONE` varchar(100) NOT NULL COMMENT "",

`P_NAME` varchar(100) NOT NULL COMMENT "",

`P_MFGR` varchar(100) NOT NULL COMMENT "",

`P_CATEGORY` varchar(100) NOT NULL COMMENT "",

`P_BRAND` varchar(100) NOT NULL COMMENT "",

`P_COLOR` varchar(100) NOT NULL COMMENT "",

`P_TYPE` varchar(100) NOT NULL COMMENT "",

`P_SIZE` tinyint(4) NOT NULL COMMENT "",

`P_CONTAINER` varchar(100) NOT NULL COMMENT ""

) ENGINE=OLAP

DUPLICATE KEY(`LO_ORDERDATE`, `LO_ORDERKEY`)

COMMENT "OLAP"

PARTITION BY RANGE(`LO_ORDERDATE`)

(PARTITION p1 VALUES [('0000-01-01'), ('1993-01-01')),

PARTITION p2 VALUES [('1993-01-01'), ('1994-01-01')),

PARTITION p3 VALUES [('1994-01-01'), ('1995-01-01')),

PARTITION p4 VALUES [('1995-01-01'), ('1996-01-01')),

PARTITION p5 VALUES [('1996-01-01'), ('1997-01-01')),

PARTITION p6 VALUES [('1997-01-01'), ('1998-01-01')),

PARTITION p7 VALUES [('1998-01-01'), ('1999-01-01')))

DISTRIBUTED BY HASH(`LO_ORDERKEY`) BUCKETS 150

PROPERTIES (

"replication_num" = "1",

"in_memory" = "false",

"storage_format" = "DEFAULT"

);

适用场景

- min/max

- Count

- bitmap_union 精确去重

- hll_union

- 调整列的顺序

真是太详细了,让优化SR SQL更有方向了,非常不错。

商业版本里各个算子的时间百分比是怎么计算的?因为Fragment,pipeline中算子都有一定程度的并行度, 所以这个百分比的定义是啥,有这么直接的数量大就瓶颈的直接关系?

healthy_report 这个工具的源代码能共享出来吗?