【Details】Shared-data cluster: unable to add partitions or create tables

【Background】

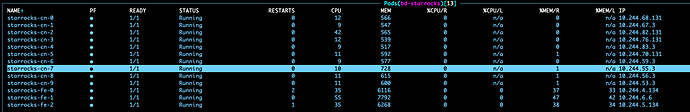

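The cluster has no BE nodes, only CN nodes.

Because the cluster is used for batch jobs, all CN nodes are scaled down every day and all 10 CNs are started again when the jobs run.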

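Scenario 1: the problem was found after upgrading from 3.3.22 to 3.4.10 and then to 3.5.13.

Scenario 2: a freshly deployed 3.5.13 shared-data cluster shows the same problem.

*Versions 3.3.22 and 3.4.10 were tested and do not have this problem.*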

【Shared-data?】Yes

【StarRocks version】3.5.13 / 3.5.14 (both versions were tried, same problem)

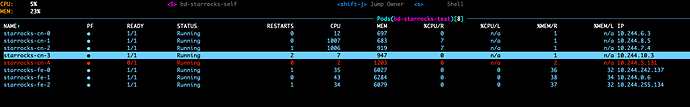

【Cluster size】3 FE + 10 CN

【Contact】StarRocks 存算分离1群 (shared-data group 1) ------ 可以自然点嘛

Reproduction:

Table schema (the table is empty):

CREATE TABLE dmp.adt_ip_country_source (

`__dt` datetime NOT NULL COMMENT "Data time, accurate to the hour",

`ip` varchar(50) NOT NULL COMMENT "ip",

`country` varchar(3) NOT NULL COMMENT "Country",

`source` varchar(16) NOT NULL COMMENT "IP source"

) ENGINE=OLAP

COMMENT "IP country table, used for IP activity statistics"

PARTITION BY date_trunc('hour', __dt)

DISTRIBUTED BY HASH(`ip`, `country`) BUCKETS 1

PROPERTIES (

"compression" = "LZ4",

"datacache.enable" = "true",

"datacache.partition_duration" = "1 days",

"enable_async_write_back" = "false",

"partition_live_number" = "840",

"replication_num" = "1",

"storage_volume" = "volume_hdfs"

);

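Run the following statement. (In normal business use the problem is mostly triggered by INSERT OVERWRITE or INSERT INTO; it happens fairly often but is hard to reproduce on demand, so this statement is used to reproduce it directly.)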

EXPLAIN

ANALYZE INSERT INTO adt_ip_country_source (__dt,ip,country,source)

SELECT DATE_ADD('2026-02-02 00:00:00', INTERVAL d hour),'','',''

FROM table(generate_series(0, 23)) AS g(d);
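As a rough check of what this statement should produce: `generate_series(0, 23)` yields 24 values, so with `PARTITION BY date_trunc('hour', __dt)` the insert touches 24 distinct hourly partitions. The sketch below enumerates them in Python. The `p` + `yyyyMMddHH` naming is an assumption inferred from the partition names in the FE log in this post, and `expected_hour_partitions` is a hypothetical helper, not a StarRocks API.

```python
from datetime import datetime, timedelta
from typing import List


def expected_hour_partitions(start: str, hours: int) -> List[str]:
    """Return one partition name per hour, starting at `start`.

    Assumption: names follow the 'p' + yyyyMMddHH pattern seen in the FE log.
    """
    base = datetime.strptime(start, "%Y-%m-%d %H:%M:%S")
    return [(base + timedelta(hours=d)).strftime("p%Y%m%d%H") for d in range(hours)]


# generate_series(0, 23) is inclusive on both ends, hence 24 hours.
names = expected_hour_partitions("2026-02-02 00:00:00", 24)
print(len(names), names[0], names[-1])  # 24 p2026020200 p2026020223
```

Comparing this list with the partitions actually present on the table is one way to confirm whether partition creation itself completed.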

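After running the manual partition pre-creation statement above twice in a row (sometimes once is enough to trigger the problem),

the statement does not return even after more than 5 minutes.

From a new client connection to the cluster, the partitions can be seen to have been created already.

The FE log also shows that the partitions were created successfully.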
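The FE-side timing can be read from the FE log excerpt at the end of this post. The following is a minimal sketch, not an official tool: it scans log text for the "build partitions sequentially" start line and the "succeed in creating partition" lines and reports the created names and the elapsed time between them. The sample holds four lines copied from that excerpt; `summarize_partition_build` is a hypothetical helper name.

```python
import re
from datetime import datetime, timedelta

# Four lines copied from the FE log excerpt in this post (start, finish,
# and the first and last of the 24 "succeed in creating partition" lines).
SAMPLE_LOG = """\
2026-03-13 09:03:37.802Z INFO (thrift-server-pool-44|1740) [TabletTaskExecutor.buildPartitionsSequentially():107] build partitions sequentially, send task one by one, all tasks timeout 480s
2026-03-13 09:03:38.826Z INFO (thrift-server-pool-44|1740) [TabletTaskExecutor.buildPartitionsSequentially():110] build partitions sequentially, all tasks finished, took 1028ms
2026-03-13 09:03:38.839Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038200], name: p2026020200, temp: false
2026-03-13 09:03:38.840Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038269], name: p2026020223, temp: false
"""

TIMESTAMP = re.compile(r"^(\d{4}-\d{2}-\d{2} \d{2}:\d{2}:\d{2}\.\d{3})Z ")
CREATED = re.compile(r"succeed in creating partition\[\d+\], name: (\w+)")


def summarize_partition_build(log_text):
    """Return (created partition names, ms from task send to last creation)."""
    started = None
    last_created = None
    names = []
    for line in log_text.splitlines():
        ts_match = TIMESTAMP.match(line)
        if ts_match is None:
            continue  # skip lines without a leading timestamp
        ts = datetime.strptime(ts_match.group(1), "%Y-%m-%d %H:%M:%S.%f")
        if "build partitions sequentially, send task" in line:
            started = ts
        created = CREATED.search(line)
        if created:
            names.append(created.group(1))
            last_created = ts
    elapsed_ms = None
    if started is not None and last_created is not None:
        # Integer division of timedeltas avoids float rounding.
        elapsed_ms = (last_created - started) // timedelta(milliseconds=1)
    return names, elapsed_ms


names, elapsed_ms = summarize_partition_build(SAMPLE_LOG)
print(names, elapsed_ms)  # ['p2026020200', 'p2026020223'] 1038
```

In the excerpt, the FE logs all 24 partitions as created about one second after sending the tasks, which suggests (but does not prove) that the multi-minute hang happens after partition creation on the FE side.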

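At this point, run an insert against any table partitioned by day,

inserting data that falls into a partition that does not exist yet.

For example, with partition '2026-03-01' already present, insert data for partition '2026-03-02':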

INSERT INTO dmp.adt_ip_country_source SELECT '2026-03-01 02:00:00','1.1.1.1','CHI','xxx';

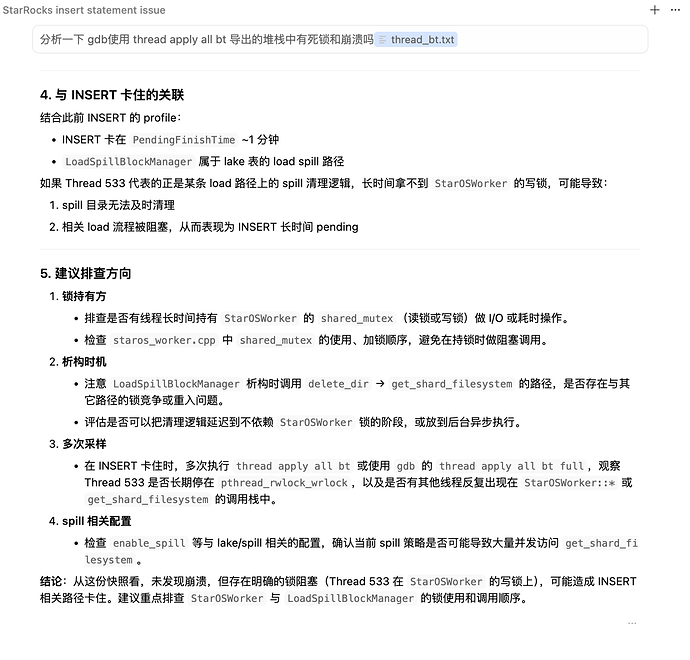

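The insert now fails, reporting a timeout while creating the new partition (increasing the timeout does not help).

Creating a table at this point times out in the same way (increasing the timeout does not help).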

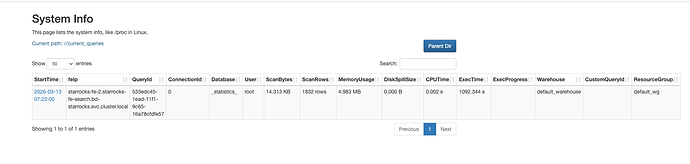

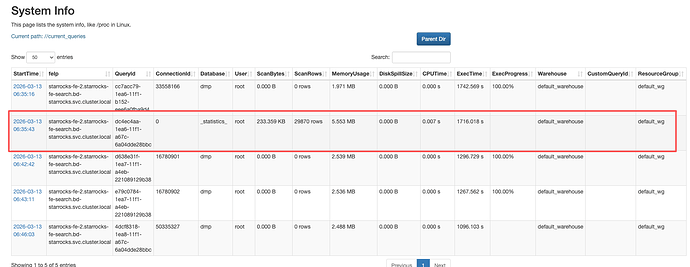

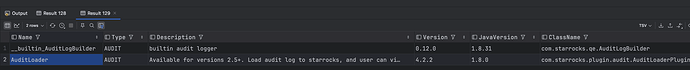

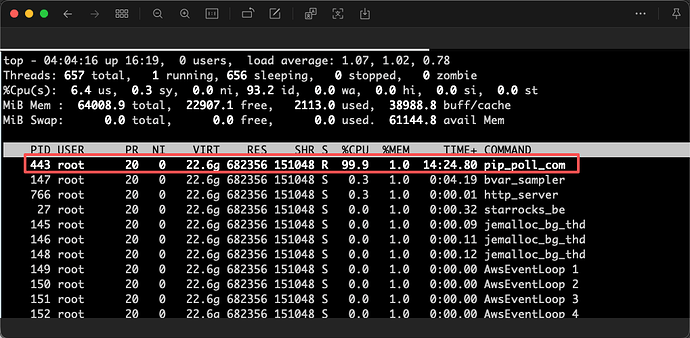

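At this point the UI also shows a query task on the 'statistics' database that is stuck and never finishes.

The cluster only returns to normal after the following:

shut down all CN nodes and wait about 10 minutes,

restart all CN nodes,

and restart the FE leader node at the same time.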

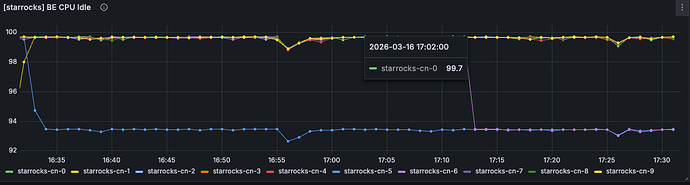

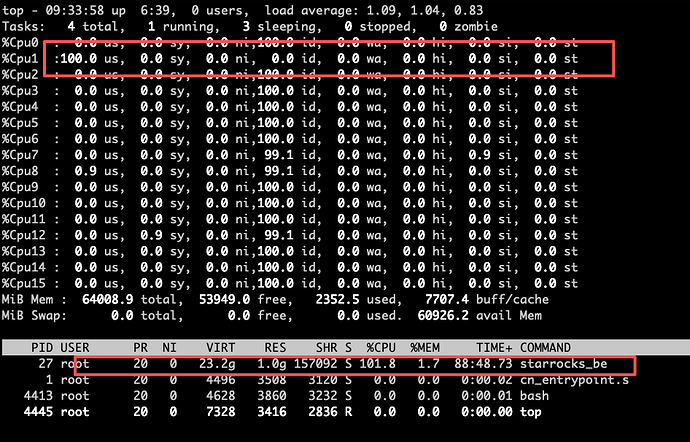

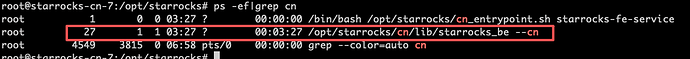

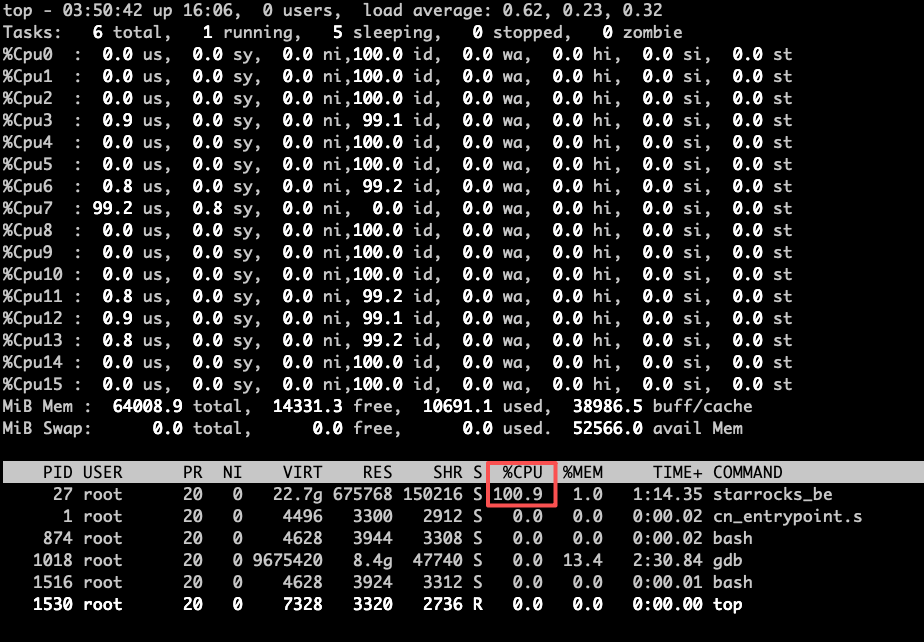

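In addition, when shutting down the CN nodes, one CN node shut down more slowly than the others. It was the same node that reported the timeout when the insert was executed.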

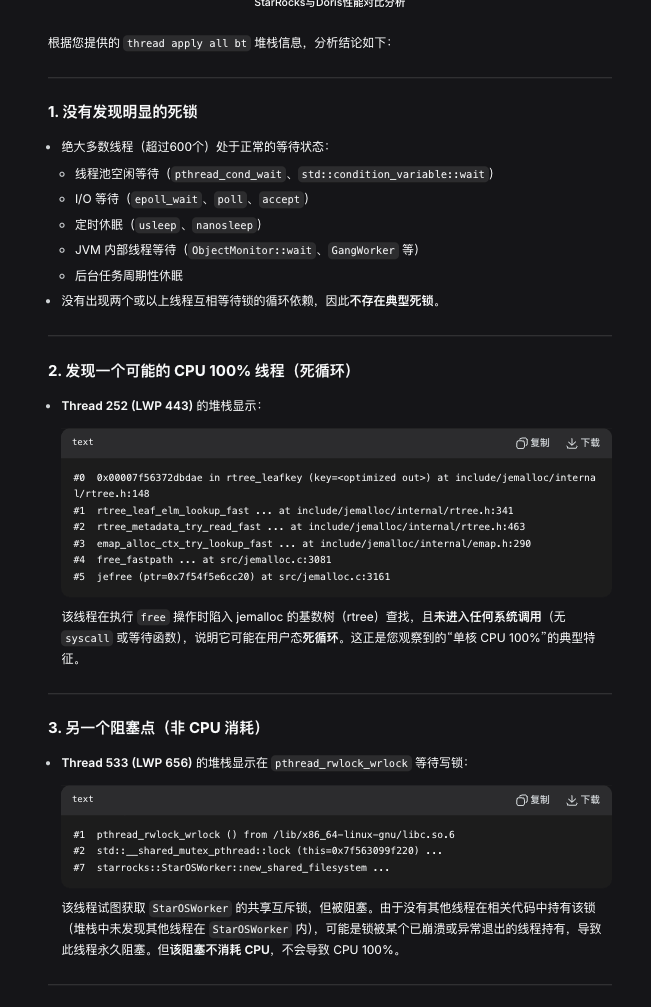

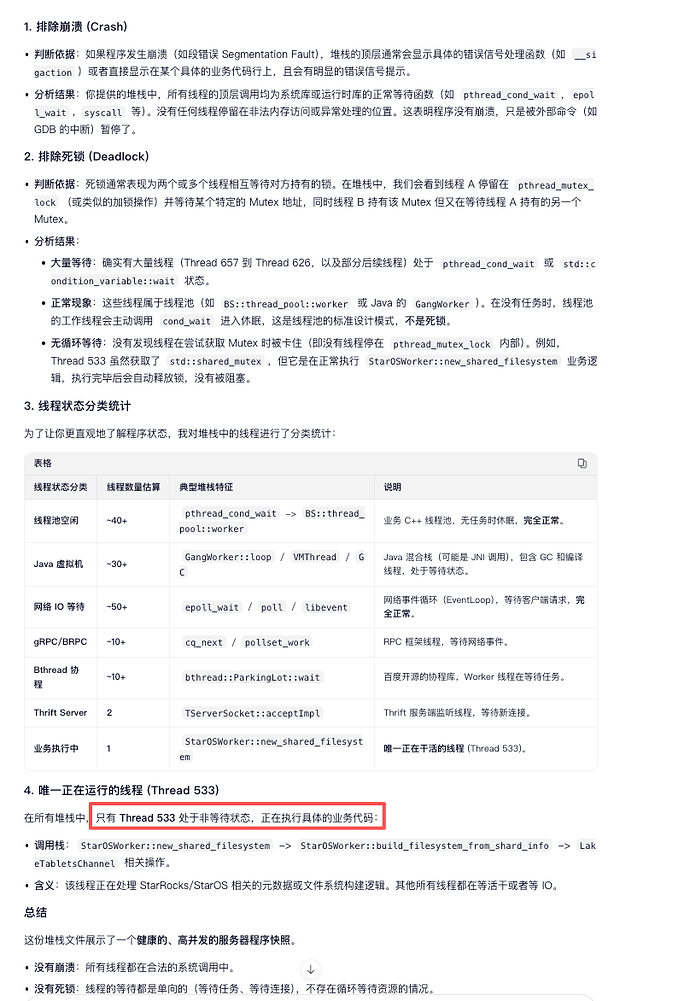

FE log while pre-creating the partitions:

10110274394128384; versionTxnType: TXN_NORMAL; storageDataSize: 0; storageRowCount: 0; storageReplicaCount: 1; bucketNum: 1;

2026-03-13 09:03:37.802Z INFO (thrift-server-pool-44|1740) [TabletTaskExecutor.buildPartitionsSequentially():107] build partitions sequentially, send task one by one, all tasks timeout 480s

2026-03-13 09:03:38.826Z INFO (thrift-server-pool-44|1740) [TabletTaskExecutor.buildPartitionsSequentially():110] build partitions sequentially, all tasks finished, took 1028ms

2026-03-13 09:03:38.839Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038200], name: p2026020200, temp: false

2026-03-13 09:03:38.839Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038203], name: p2026020201, temp: false

2026-03-13 09:03:38.839Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038206], name: p2026020202, temp: false

2026-03-13 09:03:38.839Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038209], name: p2026020203, temp: false

2026-03-13 09:03:38.839Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038212], name: p2026020204, temp: false

2026-03-13 09:03:38.839Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038215], name: p2026020205, temp: false

2026-03-13 09:03:38.839Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038218], name: p2026020206, temp: false

2026-03-13 09:03:38.839Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038221], name: p2026020207, temp: false

2026-03-13 09:03:38.839Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038224], name: p2026020208, temp: false

2026-03-13 09:03:38.839Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038227], name: p2026020209, temp: false

2026-03-13 09:03:38.839Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038230], name: p2026020210, temp: false

2026-03-13 09:03:38.839Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038233], name: p2026020211, temp: false

2026-03-13 09:03:38.839Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038236], name: p2026020212, temp: false

2026-03-13 09:03:38.839Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038239], name: p2026020213, temp: false

2026-03-13 09:03:38.839Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038242], name: p2026020214, temp: false

2026-03-13 09:03:38.839Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038245], name: p2026020215, temp: false

2026-03-13 09:03:38.839Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038248], name: p2026020216, temp: false

2026-03-13 09:03:38.839Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038251], name: p2026020217, temp: false

2026-03-13 09:03:38.839Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038254], name: p2026020218, temp: false

2026-03-13 09:03:38.839Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038257], name: p2026020219, temp: false

2026-03-13 09:03:38.839Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038260], name: p2026020220, temp: false

2026-03-13 09:03:38.839Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038263], name: p2026020221, temp: false

2026-03-13 09:03:38.840Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038266], name: p2026020222, temp: false

2026-03-13 09:03:38.840Z INFO (thrift-server-pool-44|1740) [LocalMetastore.addRangePartitionLog():1106] succeed in creating partition[10038269], name: p2026020223, temp: false

2026-03-13 09:03:46.953Z INFO (star_os_checkpoint_controller|133) [BDBJEJournal.getFinalizedJournalId():272] database names: 117237666

2026-03-13 09:03:46.953Z INFO (star_os_checkpoint_controller|133) [CheckpointController.runCheckpointControllerWithIds():152] checkpoint imageJournalId 117237665, logJournalId 0

2026-03-13 09:03:55.623Z INFO (thrift-server-pool-50|1746) [FrontendServiceImpl.loadTxnBegin():1178] receive txn begin request, db: sr_audit, tbl: audit, label: audit_20260313_090355_starrocks-fe-1_starrocks-fe-search_bd-starrocks_svc_cluster_local_9010, backend: 10.244.4.130

2026-03-13 09:03:55.624Z INFO (thrift-server-pool-50|1746) [StreamLoadMgr.beginLoadTaskFromBackend():175] STREAM_LOAD_TASK=10038272, msg={create load task}

2026-03-13 09:03:55.624Z INFO (thrift-server-pool-50|1746) [DatabaseTransactionMgr.beginTransaction():189] begin transaction: txn_id: 1594118 with label audit_20260313_090355_starrocks-fe-1_starrocks-fe-search_bd-starrocks_svc_cluster_local_9010 from coordinator BE: 10.244.4.130, listner id: 10038272

2026-03-13 09:03:55.624Z INFO (thrift-server-pool-50|1746) [StreamLoadTask.beginTxn():322] stream load audit_20260313_090355_starrocks-fe-1_starrocks-fe-search_bd-starrocks_svc_cluster_local_9010 channel_id 0 begin. db: sr_audit, tbl: audit, txn_id: 1594118

2026-03-13 09:03:55.627Z INFO (thrift-server-pool-48|1744) [DefaultCoordinator.<init>():258] Execution Profile: 054dc608-3acf-54f8-2954-8543a0ddeb98

2026-03-13 09:03:58.652Z INFO (nioEventLoopGroup-5-13|1306) [LoadAction.processNormalStreamLoad():205] redirect load action to destination=TNetworkAddress(hostname:starrocks-cn-0.starrocks-cn-search.bd-starrocks.svc.cluster.local, port:8040), db: sr_audit, tbl: audit, label: audit_20260313_090358_starrocks-fe-2_starrocks-fe-search_bd-starrocks_svc_cluster_local_9010, warehouse: default_warehouse

2026-03-13 09:03:58.654Z INFO (thrift-server-pool-24|1503) [FrontendServiceImpl.loadTxnBegin():1178] receive txn begin request, db: sr_audit, tbl: audit, label: audit_20260313_090358_starrocks-fe-2_starrocks-fe-search_bd-starrocks_svc_cluster_local_9010, backend: 10.244.244.4

2026-03-13 09:03:58.654Z INFO (thrift-server-pool-24|1503) [StreamLoadMgr.beginLoadTaskFromBackend():175] STREAM_LOAD_TASK=10038273, msg={create load task}

2026-03-13 09:03:58.655Z INFO (thrift-server-pool-24|1503) [DatabaseTransactionMgr.beginTransaction():189] begin transaction: txn_id: 1594119 with label audit_20260313_090358_starrocks-fe-2_starrocks-fe-search_bd-starrocks_svc_cluster_local_9010 from coordinator BE: 10.244.244.4, listner id: 10038273

2026-03-13 09:03:58.655Z INFO (thrift-server-pool-24|1503) [StreamLoadTask.beginTxn():322] stream load audit_20260313_090358_starrocks-fe-2_starrocks-fe-search_bd-starrocks_svc_cluster_local_9010 channel_id 0 begin. db: sr_audit, tbl: audit, txn_id: 1594119

2026-03-13 09:03:58.658Z INFO (thrift-server-pool-23|1502) [DefaultCoordinator.<init>():258] Execution Profile: 60474d49-de9c-cdec-2343-04c65f05bb88

2026-03-13 09:04:00.882Z INFO (TaskCleaner|103) [TaskManager.dropTasks():377] drop tasks:[]

2026-03-13 09:04:02.882Z INFO (thrift-server-pool-91|2485) [FrontendServiceImpl.forward():1120] receive forwarded stmt 86 from FE: 10.244.136.144

2026-03-13 09:04:02.883Z INFO (thrift-server-pool-91|2485) [DatabaseTransactionMgr.beginTransaction():189] begin transaction: txn_id: 1594120 with label delete_94c40d09-1ebb-11f1-9d2e-3e2f967789fd from coordinator FE: starrocks-fe-2.starrocks-fe-search.bd-starrocks.svc.cluster.local, listner id: -1

2026-03-13 09:04:02.894Z INFO (thrift-server-pool-91|2485) [DefaultCoordinator.prepareProfile():614] dispatch load job: 94c40d09-1ebb-11f1-9d2e-3e2f967789fd to [10038065, 10038064, 10038147, 10038149, 10038150]

2026-03-13 09:04:02.911Z INFO (PUBLISH_VERSION|25) [PublishVersionDaemon.publishLakeTransactionAsync():473] start publish lake db:10031145 table:10034138 txn:1594120

2026-03-13 09:04:02.914Z INFO (thrift-server-pool-91|2485) [DatabaseTransactionMgr.commitPreparedTransaction():546] transaction:[TransactionState. txn_id: 1594120, label: delete_94c40d09-1ebb-11f1-9d2e-3e2f967789fd, db id: 10031145, table id list: 10034138, callback id: [-1, 10038274], coordinator: FE: starrocks-fe-2.starrocks-fe-search.bd-starrocks.svc.cluster.local, transaction status: COMMITTED, error replicas num: 0, unknown replicas num: 0, prepare time: 1773392642883, write end time: 1773392642909, allow commit time: 1773392642909, commit time: 1773392642909, finish time: -1, write cost: 26ms, wait for publish cost: 2ms, reason: , attachment: com.starrocks.transaction.InsertTxnCommitAttachment@30626099, partition commit info:[]] successfully committed

2026-03-13 09:04:02.918Z INFO (PUBLISH_VERSION|25) [DatabaseTransactionMgr.finishTransaction():1358] finish transaction TransactionState. txn_id: 1594120, label: delete_94c40d09-1ebb-11f1-9d2e-3e2f967789fd, db id: 10031145, table id list: 10034138, callback id: [-1, 10038274], coordinator: FE: starrocks-fe-2.starrocks-fe-search.bd-starrocks.svc.cluster.local, transaction status: VISIBLE, error replicas num: 0, unknown replicas num: 0, prepare time: 1773392642883, write end time: 1773392642909, allow commit time: 1773392642909, commit time: 1773392642909, finish time: 1773392642914, write cost: 26ms, wait for publish cost: 2ms, finish txn cost: 3ms, publish total cost: 5ms, total cost: 31ms, reason: , attachment: com.starrocks.transaction.InsertTxnCommitAttachment@30626099, partition commit info:[] successfully

2026-03-13 09:04:02.925Z INFO (thrift-server-pool-91|2485) [FrontendServiceImpl.forward():1120] receive forwarded stmt 87 from FE: 10.244.136.144

2026-03-13 09:04:02.926Z INFO (thrift-server-pool-91|2485) [DatabaseTransactionMgr.beginTransaction():189] begin transaction: txn_id: 1594121 with label delete_94cb11ec-1ebb-11f1-9d2e-3e2f967789fd from coordinator FE: starrocks-fe-2.starrocks-fe-search.bd-starrocks.svc.cluster.local, listner id: -1

2026-03-13 09:04:02.936Z INFO (thrift-server-pool-91|2485) [DefaultCoordinator.prepareProfile():614] dispatch load job: 94cb11ec-1ebb-11f1-9d2e-3e2f967789fd to [10038065, 10038064, 10038147, 10038149, 10038150]

2026-03-13 09:04:02.954Z INFO (thrift-server-pool-91|2485) [DatabaseTransactionMgr.commitPreparedTransaction():546] transaction:[TransactionState. txn_id: 1594121, label: delete_94cb11ec-1ebb-11f1-9d2e-3e2f967789fd, db id: 10031145, table id list: 10034138, callback id: [-1, 10038275], coordinator: FE: starrocks-fe-2.starrocks-fe-search.bd-starrocks.svc.cluster.local, transaction status: COMMITTED, error replicas num: 0, unknown replicas num: 0, prepare time: 1773392642926, write end time: 1773392642950, allow commit time: 1773392642950, commit time: 1773392642950, finish time: -1, write cost: 24ms, reason: , attachment: com.starrocks.transaction.InsertTxnCommitAttachment@7c5b9579, partition commit info:[]] successfully committed

2026-03-13 09:04:02.958Z INFO (PUBLISH_VERSION|25) [PublishVersionDaemon.publishLakeTransactionAsync():473] start publish lake db:10031145 table:10034138 txn:1594121

2026-03-13 09:04:02.962Z INFO (PUBLISH_VERSION|25) [DatabaseTransactionMgr.finishTransaction():1358] finish transaction TransactionState. txn_id: 1594121, label: delete_94cb11ec-1ebb-11f1-9d2e-3e2f967789fd, db id: 10031145, table id list: 10034138, callback id: [-1, 10038275], coordinator: FE: starrocks-fe-2.starrocks-fe-search.bd-starrocks.svc.cluster.local, transaction status: VISIBLE, error replicas num: 0, unknown replicas num: 0, prepare time: 1773392642926, write end time: 1773392642950, allow commit time: 1773392642950, commit time: 1773392642950, finish time: 1773392642959, write cost: 24ms, wait for publish cost: 8ms, finish txn cost: 1ms, publish total cost: 9ms, total cost: 33ms, reason: , attachment: com.starrocks.transaction.InsertTxnCommitAttachment@7c5b9579, partition commit info:[] successfully

2026-03-13 09:04:03.674Z INFO (PredicateColumnsDaemonThread|152) [DatabaseTransactionMgr.beginTransaction():189] begin transaction: txn_id: 1594122 with label delete_953d34c2-1ebb-11f1-a066-9ac14c6ab7cf from coordinator FE: starrocks-fe-2.starrocks-fe-search.bd-starrocks.svc.cluster.local, listner id: -1

2026-03-13 09:04:03.685Z INFO (PredicateColumnsDaemonThread|152) [DefaultCoordinator.prepareProfile():614] dispatch load job: 953d34c2-1ebb-11f1-a066-9ac14c6ab7cf to [10038065, 10038064, 10038147, 10038149, 10038150]

2026-03-13 09:04:03.698Z INFO (PUBLISH_VERSION|25) [PublishVersionDaemon.publishLakeTransactionAsync():473] start publish lake db:10031145 table:10034138 txn:1594122

2026-03-13 09:04:03.700Z INFO (PredicateColumnsDaemonThread|152) [DatabaseTransactionMgr.commitPreparedTransaction():546] transaction:[TransactionState. txn_id: 1594122, label: delete_953d34c2-1ebb-11f1-a066-9ac14c6ab7cf, db id: 10031145, table id list: 10034138, callback id: [-1, 10038276], coordinator: FE: starrocks-fe-2.starrocks-fe-search.bd-starrocks.svc.cluster.local, transaction status: COMMITTED, error replicas num: 0, unknown replicas num: 0, prepare time: 1773392643674, write end time: 1773392643695, allow commit time: 1773392643695, commit time: 1773392643695, finish time: -1, write cost: 21ms, wait for publish cost: 3ms, reason: , attachment: com.starrocks.transaction.InsertTxnCommitAttachment@7e22e18a, partition commit info:[]] successfully committed

2026-03-13 09:04:03.704Z INFO (PUBLISH_VERSION|25) [DatabaseTransactionMgr.finishTransaction():1358] finish transaction TransactionState. txn_id: 1594122, label: delete_953d34c2-1ebb-11f1-a066-9ac14c6ab7cf, db id: 10031145, table id list: 10034138, callback id: [-1, 10038276], coordinator: FE: starrocks-fe-2.starrocks-fe-search.bd-starrocks.svc.cluster.local, transaction status: VISIBLE, error replicas num: 0, unknown replicas num: 0, prepare time: 1773392643674, write end time: 1773392643695, allow commit time: 1773392643695, commit time: 1773392643695, finish time: 1773392643700, write cost: 21ms, wait for publish cost: 3ms, finish txn cost: 2ms, publish total cost: 5ms, total cost: 26ms, reason: , attachment: com.starrocks.transaction.InsertTxnCommitAttachment@7e22e18a, partition commit info:[] successfully

2026-03-13 09:04:03.708Z INFO (PredicateColumnsDaemonThread|152) [DatabaseTransactionMgr.beginTransaction():189] begin transaction: txn_id: 1594123 with label delete_95428bf5-1ebb-11f1-a066-9ac14c6ab7cf from coordinator FE: starrocks-fe-2.starrocks-fe-search.bd-starrocks.svc.cluster.local, listner id: -1

2026-03-13 09:04:03.719Z INFO (PredicateColumnsDaemonThread|152) [DefaultCoordinator.prepareProfile():614] dispatch load job: 95428bf5-1ebb-11f1-a066-9ac14c6ab7cf to [10038065, 10038064, 10038147, 10038149, 10038150]

2026-03-13 09:04:03.733Z INFO (PredicateColumnsDaemonThread|152) [DatabaseTransactionMgr.commitPreparedTransaction():546] transaction:[TransactionState. txn_id: 1594123, label: delete_95428bf5-1ebb-11f1-a066-9ac14c6ab7cf, db id: 10031145, table id list: 10034138, callback id: [-1, 10038277], coordinator: FE: starrocks-fe-2.starrocks-fe-search.bd-starrocks.svc.cluster.local, transaction status: COMMITTED, error replicas num: 0, unknown replicas num: 0, prepare time: 1773392643709, write end time: 1773392643729, allow commit time: 1773392643729, commit time: 1773392643729, finish time: -1, write cost: 20ms, reason: , attachment: com.starrocks.transaction.InsertTxnCommitAttachment@1c83f656, partition commit info:[]] successfully committed

2026-03-13 09:04:03.734Z INFO (PUBLISH_VERSION|25) [PublishVersionDaemon.publishLakeTransactionAsync():473] start publish lake db:10031145 table:10034138 txn:1594123

2026-03-13 09:04:03.738Z INFO (PUBLISH_VERSION|25) [DatabaseTransactionMgr.finishTransaction():1358] finish transaction TransactionState. txn_id: 1594123, label: delete_95428bf5-1ebb-11f1-a066-9ac14c6ab7cf, db id: 10031145, table id list: 10034138, callback id: [-1, 10038277], coordinator: FE: starrocks-fe-2.starrocks-fe-search.bd-starrocks.svc.cluster.local, transaction status: VISIBLE, error replicas num: 0, unknown replicas num: 0, prepare time: 1773392643709, write end time: 1773392643729, allow commit time: 1773392643729, commit time: 1773392643729, finish time: 1773392643734, write cost: 20ms, wait for publish cost: 5ms, publish total cost: 5ms, total cost: 25ms, reason: , attachment: com.starrocks.transaction.InsertTxnCommitAttachment@1c83f656, partition commit info:[] successfully

2026-03-13 09:04:03.742Z INFO (PredicateColumnsDaemonThread|152) [PredicateColumnsStorage.vacuum():272] vacuum column usage from storage before 2026-03-12T09:04:03.672985121

2026-03-13 09:04:03.742Z INFO (PredicateColumnsDaemonThread|152) [PredicateColumnsStorage.persist():210] persist 0 diffed predicate columns elapsed 20.11 μs, update lastPersist to 2026-03-13T09:04:03.742481716

2026-03-13 09:04:07.615Z INFO (Load history syncer|40) [DatabaseTransactionMgr.beginTransaction():189] begin transaction: txn_id: 1594124 with label insert_9796b52e-1ebb-11f1-a066-9ac14c6ab7cf from coordinator FE: starrocks-fe-2.starrocks-fe-search.bd-starrocks.svc.cluster.local, listner id: -1

2026-03-13 09:04:07.636Z INFO (Load history syncer|40) [DefaultCoordinator.prepareProfile():614] dispatch load job: 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf to [10038065, 10038064, 10038147, 10038149, 10038150]

2026-03-13 09:04:16.182Z INFO (global_state_checkpoint_controller|134) [BDBJEJournal.getFinalizedJournalId():272] database names: 20321977

2026-03-13 09:04:16.182Z INFO (global_state_checkpoint_controller|134) [CheckpointController.runCheckpointControllerWithIds():152] checkpoint imageJournalId 20321976, logJournalId 0

2026-03-13 09:04:19.211Z INFO (MemoryUsageTracker|58) [MemoryUsageTracker.trackMemory():165] (0ms) Module Agent - AgentTaskTracker estimated 0B of memory. Contains AgentTask with 0 object(s).

2026-03-13 09:04:19.211Z INFO (MemoryUsageTracker|58) [MemoryUsageTracker.trackMemory():165] (0ms) Module Backup - BackupHandler estimated 0B of memory. Contains BackupOrRestoreJob with 0 object(s).

2026-03-13 09:04:19.212Z INFO (MemoryUsageTracker|58) [MemoryUsageTracker.trackMemory():165] (0ms) Module Compaction - CompactionMgr estimated 181.6KB of memory. Contains PartitionStats with 3875 object(s).

2026-03-13 09:04:19.212Z INFO (MemoryUsageTracker|58) [MemoryUsageTracker.trackMemory():165] (0ms) Module Coordinator - QeProcessorImpl estimated 160B of memory. Contains QueryCoordinator with 4 object(s).

2026-03-13 09:04:19.212Z INFO (MemoryUsageTracker|58) [MemoryUsageTracker.trackMemory():165] (0ms) Module Delete - DeleteMgr estimated 1.2KB of memory. Contains DeleteInfo with 22 object(s). DeleteJob with 0 object(s).

2026-03-13 09:04:19.212Z INFO (MemoryUsageTracker|58) [MemoryUsageTracker.trackMemory():165] (0ms) Module Dict - CacheDictManager estimated 96B of memory. Contains ColumnDict with 3 object(s).

2026-03-13 09:04:19.212Z INFO (MemoryUsageTracker|58) [MemoryUsageTracker.trackMemory():165] (0ms) Module Export - ExportMgr estimated 0B of memory. Contains ExportJob with 0 object(s).

2026-03-13 09:04:19.213Z INFO (MemoryUsageTracker|58) [MemoryUsageTracker.trackMemory():165] (0ms) Module Load - InsertOverwriteJobMgr estimated 0B of memory. Contains insertOverwriteJobs with 0 object(s).

2026-03-13 09:04:19.213Z INFO (MemoryUsageTracker|58) [MemoryUsageTracker.trackMemory():165] (0ms) Module Load - LoadMgr estimated 20.2KB of memory. Contains LoadJob with 96 object(s).

2026-03-13 09:04:19.213Z INFO (MemoryUsageTracker|58) [MemoryUsageTracker.trackMemory():165] (0ms) Module Load - RoutineLoadMgr estimated 0B of memory. Contains RoutineLoad with 0 object(s).

2026-03-13 09:04:19.213Z INFO (MemoryUsageTracker|58) [MemoryUsageTracker.trackMemory():165] (0ms) Module Load - StreamLoadMgr estimated 3.8KB of memory. Contains StreamLoad with 14 object(s).

2026-03-13 09:04:19.213Z INFO (MemoryUsageTracker|58) [MemoryUsageTracker.trackMemory():165] (0ms) Module LocalMetastore - LocalMetastore estimated 628.9KB of memory. Contains Partition with 4025 object(s).

2026-03-13 09:04:19.213Z INFO (MemoryUsageTracker|58) [MemoryUsageTracker.trackMemory():165] (0ms) Module MV - MVTimelinessMgr estimated 0B of memory. Contains mvTimelinessMap with 0 object(s).

2026-03-13 09:04:19.213Z INFO (MemoryUsageTracker|58) [MemoryUsageTracker.trackMemory():165] (0ms) Module Profile - ProfileManager estimated 744B of memory. Contains QueryProfile with 31 object(s).

2026-03-13 09:04:19.213Z INFO (MemoryUsageTracker|58) [MemoryUsageTracker.trackMemory():165] (0ms) Module Query - QueryTracker estimated 0B of memory. Contains QueryDetail with 0 object(s).

2026-03-13 09:04:19.214Z INFO (MemoryUsageTracker|58) [MemoryUsageTracker.trackMemory():165] (0ms) Module Report - ReportHandler estimated 0B of memory. Contains PendingTask with 0 object(s). ReportQueue with 0 object(s).

2026-03-13 09:04:19.214Z INFO (MemoryUsageTracker|58) [MemoryUsageTracker.trackMemory():165] (0ms) Module Statistics - CachedStatisticStorage estimated 128B of memory. Contains TableStats with 2 object(s). ColumnStats with 4 object(s). PartitionStats with 0 object(s). HistogramStats with 0 object(s). ConnectorTableStats with 0 object(s). ConnectorHistogramStats with 0 object(s). MultiColumnCombinedStats with 1 object(s).

2026-03-13 09:04:19.214Z INFO (MemoryUsageTracker|58) [MemoryUsageTracker.trackMemory():165] (0ms) Module TabletInvertedIndex - TabletInvertedIndex estimated 9MB of memory. Contains TabletMeta with 131883 object(s). TabletCount with 131883 object(s). ReplicateCount with 0 object(s).

2026-03-13 09:04:19.214Z INFO (MemoryUsageTracker|58) [MemoryUsageTracker.trackMemory():165] (0ms) Module Task - TaskManager estimated 23.6KB of memory. Contains Task with 233 object(s).

2026-03-13 09:04:19.214Z INFO (MemoryUsageTracker|58) [MemoryUsageTracker.trackMemory():165] (0ms) Module Task - TaskRunManager estimated 0B of memory. Contains PendingTaskRun with 0 object(s). RunningTaskRun with 0 object(s). HistoryTaskRun with 0 object(s).

2026-03-13 09:04:19.214Z INFO (MemoryUsageTracker|58) [MemoryUsageTracker.trackMemory():165] (0ms) Module Transaction - GlobalTransactionMgr estimated 220.7KB of memory. Contains Txn with 879 object(s). TxnCallbackCount with 4 object(s).

2026-03-13 09:04:19.215Z INFO (MemoryUsageTracker|58) [MemoryUsageTracker.trackMemory():112] total tracked memory: 10.1MB, jvm: Process used: 8GB, heap used: 1.6GB, non heap used: 216.5MB, direct buffer used: 95MB

2026-03-13 09:04:35.287Z INFO (thrift-server-pool-95|2534) [FrontendServiceImpl.forward():1120] receive forwarded stmt 131 from FE: 10.244.136.13

2026-03-13 09:04:35.287Z INFO (thrift-server-pool-95|2534) [DatabaseTransactionMgr.beginTransaction():189] begin transaction: txn_id: 1594125 with label delete_a814d0fb-1ebb-11f1-a729-fa8d85fb3e18 from coordinator FE: starrocks-fe-2.starrocks-fe-search.bd-starrocks.svc.cluster.local, listner id: -1

2026-03-13 09:04:35.298Z INFO (thrift-server-pool-95|2534) [DefaultCoordinator.prepareProfile():614] dispatch load job: a814d0fb-1ebb-11f1-a729-fa8d85fb3e18 to [10038065, 10038064, 10038147, 10038149, 10038150]

2026-03-13 09:04:35.317Z INFO (thrift-server-pool-95|2534) [DatabaseTransactionMgr.commitPreparedTransaction():546] transaction:[TransactionState. txn_id: 1594125, label: delete_a814d0fb-1ebb-11f1-a729-fa8d85fb3e18, db id: 10031145, table id list: 10034138, callback id: [-1, 10038279], coordinator: FE: starrocks-fe-2.starrocks-fe-search.bd-starrocks.svc.cluster.local, transaction status: COMMITTED, error replicas num: 0, unknown replicas num: 0, prepare time: 1773392675288, write end time: 1773392675312, allow commit time: 1773392675312, commit time: 1773392675312, finish time: -1, write cost: 24ms, reason: , attachment: com.starrocks.transaction.InsertTxnCommitAttachment@52880f50, partition commit info:[]] successfully committed

2026-03-13 09:04:35.320Z INFO (PUBLISH_VERSION|25) [PublishVersionDaemon.publishLakeTransactionAsync():473] start publish lake db:10031145 table:10034138 txn:1594125

2026-03-13 09:04:35.324Z INFO (PUBLISH_VERSION|25) [DatabaseTransactionMgr.finishTransaction():1358] finish transaction TransactionState. txn_id: 1594125, label: delete_a814d0fb-1ebb-11f1-a729-fa8d85fb3e18, db id: 10031145, table id list: 10034138, callback id: [-1, 10038279], coordinator: FE: starrocks-fe-2.starrocks-fe-search.bd-starrocks.svc.cluster.local, transaction status: VISIBLE, error replicas num: 0, unknown replicas num: 0, prepare time: 1773392675288, write end time: 1773392675312, allow commit time: 1773392675312, commit time: 1773392675312, finish time: 1773392675320, write cost: 24ms, wait for publish cost: 8ms, publish total cost: 8ms, total cost: 32ms, reason: , attachment: com.starrocks.transaction.InsertTxnCommitAttachment@52880f50, partition commit info:[] successfully

2026-03-13 09:04:35.332Z INFO (thrift-server-pool-95|2534) [FrontendServiceImpl.forward():1120] receive forwarded stmt 132 from FE: 10.244.136.13

2026-03-13 09:04:35.333Z INFO (thrift-server-pool-95|2534) [DatabaseTransactionMgr.beginTransaction():189] begin transaction: txn_id: 1594126 with label delete_a81bfcee-1ebb-11f1-a729-fa8d85fb3e18 from coordinator FE: starrocks-fe-2.starrocks-fe-search.bd-starrocks.svc.cluster.local, listner id: -1

2026-03-13 09:04:35.343Z INFO (thrift-server-pool-95|2534) [DefaultCoordinator.prepareProfile():614] dispatch load job: a81bfcee-1ebb-11f1-a729-fa8d85fb3e18 to [10038065, 10038064, 10038147, 10038149, 10038150]

2026-03-13 09:04:35.360Z INFO (thrift-server-pool-95|2534) [DatabaseTransactionMgr.commitPreparedTransaction():546] transaction:[TransactionState. txn_id: 1594126, label: delete_a81bfcee-1ebb-11f1-a729-fa8d85fb3e18, db id: 10031145, table id list: 10034138, callback id: [-1, 10038280], coordinator: FE: starrocks-fe-2.starrocks-fe-search.bd-starrocks.svc.cluster.local, transaction status: COMMITTED, error replicas num: 0, unknown replicas num: 0, prepare time: 1773392675333, write end time: 1773392675356, allow commit time: 1773392675356, commit time: 1773392675356, finish time: -1, write cost: 23ms, reason: , attachment: com.starrocks.transaction.InsertTxnCommitAttachment@56095f4e, partition commit info:[]] successfully committed

2026-03-13 09:04:35.365Z INFO (PUBLISH_VERSION|25) [PublishVersionDaemon.publishLakeTransactionAsync():473] start publish lake db:10031145 table:10034138 txn:1594126

2026-03-13 09:04:35.369Z INFO (PUBLISH_VERSION|25) [DatabaseTransactionMgr.finishTransaction():1358] finish transaction TransactionState. txn_id: 1594126, label: delete_a81bfcee-1ebb-11f1-a729-fa8d85fb3e18, db id: 10031145, table id list: 10034138, callback id: [-1, 10038280], coordinator: FE: starrocks-fe-2.starrocks-fe-search.bd-starrocks.svc.cluster.local, transaction status: VISIBLE, error replicas num: 0, unknown replicas num: 0, prepare time: 1773392675333, write end time: 1773392675356, allow commit time: 1773392675356, commit time: 1773392675356, finish time: 1773392675365, write cost: 23ms, wait for publish cost: 9ms, publish total cost: 9ms, total cost: 32ms, reason: , attachment: com.starrocks.transaction.InsertTxnCommitAttachment@56095f4e, partition commit info:[] successfully

2026-03-13 09:04:37.120Z INFO (thrift-server-pool-42|1738) [FrontendServiceImpl.loadTxnBegin():1178] receive txn begin request, db: sr_audit, tbl: audit, label: audit_20260313_090437_starrocks-fe-0_starrocks-fe-search_bd-starrocks_svc_cluster_local_9010, backend: 10.244.2.130

2026-03-13 09:04:37.120Z INFO (thrift-server-pool-42|1738) [StreamLoadMgr.beginLoadTaskFromBackend():175] STREAM_LOAD_TASK=10038281, msg={create load task}

2026-03-13 09:04:37.120Z INFO (thrift-server-pool-42|1738) [DatabaseTransactionMgr.beginTransaction():189] begin transaction: txn_id: 1594127 with label audit_20260313_090437_starrocks-fe-0_starrocks-fe-search_bd-starrocks_svc_cluster_local_9010 from coordinator BE: 10.244.2.130, listner id: 10038281

2026-03-13 09:04:37.120Z INFO (thrift-server-pool-42|1738) [StreamLoadTask.beginTxn():322] stream load audit_20260313_090437_starrocks-fe-0_starrocks-fe-search_bd-starrocks_svc_cluster_local_9010 channel_id 0 begin. db: sr_audit, tbl: audit, txn_id: 1594127

2026-03-13 09:04:37.124Z INFO (thrift-server-pool-45|1741) [DefaultCoordinator.<init>():258] Execution Profile: 5b4e9df6-6b95-1848-93f2-46a9a7fcc390

2026-03-13 09:04:46.954Z INFO (star_os_checkpoint_controller|133) [BDBJEJournal.getFinalizedJournalId():272] database names: 117237666

2026-03-13 09:04:46.954Z INFO (star_os_checkpoint_controller|133) [CheckpointController.runCheckpointControllerWithIds():152] checkpoint imageJournalId 117237665, logJournalId 0

2026-03-13 09:04:55.854Z INFO (thrift-server-pool-96|2568) [FrontendServiceImpl.forward():1120] receive forwarded stmt 0 from FE: 10.244.136.144

2026-03-13 09:04:55.876Z WARN (thrift-server-pool-96|2568) [QueryStatisticsInfo.getExecProgress():422] failed to get query progress, query_id: 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf, status code: 404, msg: query id 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf not found.

2026-03-13 09:04:55.886Z WARN (thrift-server-pool-96|2568) [QueryStatisticsInfo.getExecProgress():422] failed to get query progress, query_id: 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf, status code: 404, msg: query id 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf not found.

2026-03-13 09:04:56.742Z INFO (thrift-server-pool-96|2568) [FrontendServiceImpl.forward():1120] receive forwarded stmt 0 from FE: 10.244.136.144

2026-03-13 09:04:56.755Z WARN (thrift-server-pool-96|2568) [QueryStatisticsInfo.getExecProgress():422] failed to get query progress, query_id: 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf, status code: 404, msg: query id 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf not found.

2026-03-13 09:04:56.763Z WARN (thrift-server-pool-96|2568) [QueryStatisticsInfo.getExecProgress():422] failed to get query progress, query_id: 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf, status code: 404, msg: query id 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf not found.

2026-03-13 09:04:57.604Z INFO (thrift-server-pool-96|2568) [FrontendServiceImpl.forward():1120] receive forwarded stmt 0 from FE: 10.244.136.144

2026-03-13 09:04:57.613Z WARN (thrift-server-pool-96|2568) [QueryStatisticsInfo.getExecProgress():422] failed to get query progress, query_id: 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf, status code: 404, msg: query id 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf not found.

2026-03-13 09:04:57.619Z WARN (thrift-server-pool-96|2568) [QueryStatisticsInfo.getExecProgress():422] failed to get query progress, query_id: 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf, status code: 404, msg: query id 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf not found.

2026-03-13 09:04:58.207Z INFO (thrift-server-pool-96|2568) [FrontendServiceImpl.forward():1120] receive forwarded stmt 0 from FE: 10.244.136.144

2026-03-13 09:04:58.217Z WARN (thrift-server-pool-96|2568) [QueryStatisticsInfo.getExecProgress():422] failed to get query progress, query_id: 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf, status code: 404, msg: query id 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf not found.

2026-03-13 09:04:58.225Z WARN (thrift-server-pool-96|2568) [QueryStatisticsInfo.getExecProgress():422] failed to get query progress, query_id: 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf, status code: 404, msg: query id 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf not found.

2026-03-13 09:04:58.688Z INFO (thrift-server-pool-96|2568) [FrontendServiceImpl.forward():1120] receive forwarded stmt 0 from FE: 10.244.136.144

2026-03-13 09:04:58.697Z WARN (thrift-server-pool-96|2568) [QueryStatisticsInfo.getExecProgress():422] failed to get query progress, query_id: 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf, status code: 404, msg: query id 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf not found.

2026-03-13 09:04:58.706Z WARN (thrift-server-pool-96|2568) [QueryStatisticsInfo.getExecProgress():422] failed to get query progress, query_id: 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf, status code: 404, msg: query id 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf not found.

2026-03-13 09:04:59.186Z INFO (thrift-server-pool-96|2568) [FrontendServiceImpl.forward():1120] receive forwarded stmt 0 from FE: 10.244.136.144

2026-03-13 09:04:59.194Z WARN (thrift-server-pool-96|2568) [QueryStatisticsInfo.getExecProgress():422] failed to get query progress, query_id: 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf, status code: 404, msg: query id 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf not found.

2026-03-13 09:04:59.200Z WARN (thrift-server-pool-96|2568) [QueryStatisticsInfo.getExecProgress():422] failed to get query progress, query_id: 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf, status code: 404, msg: query id 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf not found.

2026-03-13 09:04:59.741Z INFO (thrift-server-pool-96|2568) [FrontendServiceImpl.forward():1120] receive forwarded stmt 0 from FE: 10.244.136.144

2026-03-13 09:04:59.750Z WARN (thrift-server-pool-96|2568) [QueryStatisticsInfo.getExecProgress():422] failed to get query progress, query_id: 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf, status code: 404, msg: query id 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf not found.

2026-03-13 09:04:59.758Z WARN (thrift-server-pool-96|2568) [QueryStatisticsInfo.getExecProgress():422] failed to get query progress, query_id: 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf, status code: 404, msg: query id 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf not found.

2026-03-13 09:05:00.365Z INFO (thrift-server-pool-96|2568) [FrontendServiceImpl.forward():1120] receive forwarded stmt 0 from FE: 10.244.136.144

2026-03-13 09:05:00.374Z WARN (thrift-server-pool-96|2568) [QueryStatisticsInfo.getExecProgress():422] failed to get query progress, query_id: 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf, status code: 404, msg: query id 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf not found.

2026-03-13 09:05:00.381Z WARN (thrift-server-pool-96|2568) [QueryStatisticsInfo.getExecProgress():422] failed to get query progress, query_id: 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf, status code: 404, msg: query id 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf not found.

2026-03-13 09:05:00.882Z INFO (TaskCleaner|103) [TaskManager.dropTasks():377] drop tasks:[]

2026-03-13 09:05:00.894Z INFO (com.starrocks.connector.hive.ConnectorTableMetadataProcessor|35) [ConnectorTableMetadataProcessor.refreshCatalogTable():127] Starting to refresh tables from cl_hive:hive metadata cache

2026-03-13 09:05:00.895Z INFO (com.starrocks.connector.hive.ConnectorTableMetadataProcessor|35) [ConnectorTableMetadataProcessor.refreshCatalogTable():157] refresh connector metadata cl_hive finished

2026-03-13 09:05:00.927Z INFO (thrift-server-pool-96|2568) [FrontendServiceImpl.forward():1120] receive forwarded stmt 0 from FE: 10.244.136.144

2026-03-13 09:05:00.929Z INFO (AutoStatistic|28) [StatisticAutoCollector.runJobs():88] auto collect statistic on analyze job[10038282] start

2026-03-13 09:05:00.936Z WARN (thrift-server-pool-96|2568) [QueryStatisticsInfo.getExecProgress():422] failed to get query progress, query_id: 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf, status code: 404, msg: query id 9796b52e-1ebb-11f1-a066-9ac14c6ab7cf not found.

2026-03-13 09:05:00.938Z INFO (DynamicPartitionScheduler|47) [DynamicPartitionScheduler.findSchedulableTables():491] finished to find all schedulable tables, cost: 0ms, dynamic partition tables: {}, ttl partition tables: {dmp=[adt_ip_country_source_ljx, adt_ip_label_num, adt_ip_country_active_last_day_7, adt_ip_bid_floor, adt_ip_country_source_ljx_2, adt_ip_country_source, adt_ip_label_hll, adt_ua_ip_correlation, adt_ip_country_active_last_day_15], _statistics_=[loads_history, task_run_history], monitor=[log], dms=[rtb_device_af_revenue_country, rtb_device_af_revenue, okspin_callback_active, ssp_event_session, rtb_ml_shadding_base, rtb_ml_okspin_left, rtb_ml_billing_right], sr_audit=[audit]}, scheduler enabled: true, scheduler interval: 600s

The FE log from the insert is as follows:

at com.starrocks.qe.SimpleExecutor.executeDDL(SimpleExecutor.java:146) ~[starrocks-fe.jar:?]

at com.starrocks.scheduler.history.TableKeeper.createTable(TableKeeper.java:96) ~[starrocks-fe.jar:?]

at com.starrocks.scheduler.history.TableKeeper.run(TableKeeper.java:68) ~[starrocks-fe.jar:?]

at com.starrocks.scheduler.history.TableKeeper$TableKeeperDaemon.runAfterCatalogReady(TableKeeper.java:222) ~[starrocks-fe.jar:?]

at com.starrocks.common.util.FrontendDaemon.runOneCycle(FrontendDaemon.java:72) ~[starrocks-fe.jar:?]

at com.starrocks.common.util.Daemon.run(Daemon.java:98) ~[starrocks-fe.jar:?]

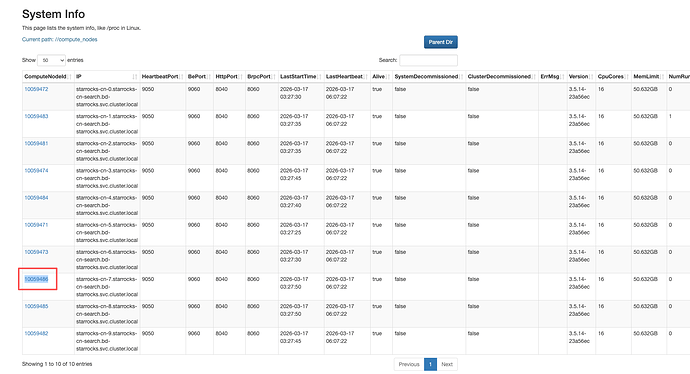

Caused by: com.starrocks.common.DdlException: Table creation timed out. unfinished replicas(1/1): 10030262(starrocks-cn-5.starrocks-cn-search.bd-starrocks.svc.cluster.local) timeout=60s

You can increase the timeout by increasing the config "tablet_create_timeout_second" and try again.

To increase the config "tablet_create_timeout_second" (currently 60), run the following command:

admin set frontend config("tablet_create_timeout_second"="120")

or add the following configuration to the fe.conf file and restart the process:

tablet_create_timeout_second=120

at com.starrocks.task.TabletTaskExecutor.waitForFinished(TabletTaskExecutor.java:418) ~[starrocks-fe.jar:?]

at com.starrocks.task.TabletTaskExecutor.sendCreateReplicaTasksAndWaitForFinished(TabletTaskExecutor.java:299) ~[starrocks-fe.jar:?]

at com.starrocks.task.TabletTaskExecutor.buildPartitionsSequentially(TabletTaskExecutor.java:109) ~[starrocks-fe.jar:?]

at com.starrocks.server.LocalMetastore.buildPartitions(LocalMetastore.java:1940) ~[starrocks-fe.jar:?]

at com.starrocks.server.OlapTableFactory.createTable(OlapTableFactory.java:768) ~[starrocks-fe.jar:?]

at com.starrocks.server.LocalMetastore.createTable(LocalMetastore.java:840) ~[starrocks-fe.jar:?]

at com.starrocks.server.MetadataMgr.createTable(MetadataMgr.java:300) ~[starrocks-fe.jar:?]

at com.starrocks.qe.DDLStmtExecutor$StmtExecutorVisitor.lambda$visitCreateTableStatement$4(DDLStmtExecutor.java:298) ~[starrocks-fe.jar:?]

at com.starrocks.common.ErrorReport.wrapWithRuntimeException(ErrorReport.java:118) ~[starrocks-fe.jar:?]

at com.starrocks.qe.DDLStmtExecutor$StmtExecutorVisitor.visitCreateTableStatement(DDLStmtExecutor.java:297) ~[starrocks-fe.jar:?]

at com.starrocks.qe.DDLStmtExecutor$StmtExecutorVisitor.visitCreateTableStatement(DDLStmtExecutor.java:203) ~[starrocks-fe.jar:?]

at com.starrocks.sql.ast.CreateTableStmt.accept(CreateTableStmt.java:345) ~[starrocks-fe.jar:?]

at com.starrocks.sql.ast.AstVisitor.visit(AstVisitor.java:101) ~[starrocks-fe.jar:?]

at com.starrocks.qe.DDLStmtExecutor.execute(DDLStmtExecutor.java:188) ~[starrocks-fe.jar:?]

at com.starrocks.qe.SimpleExecutor.executeDDL(SimpleExecutor.java:141) ~[starrocks-fe.jar:?]

... 5 more

2026-03-13 07:52:08.997Z INFO (TableKeeper|100) [LocalMetastore.buildPartitions():1938] start to build 1 partitions sequentially for table _statistics_.loads_history with 3 replicas

2026-03-13 07:52:08.998Z INFO (TableKeeper|100) [TabletTaskExecutor.buildCreateReplicaTasks():223] build create replica tasks for index index id: 10030269; index state: NORMAL; shardGroupId: 286003; row count: 0; tablets size: 3; visibleTxnId: 0; tablets: [tablet: id=10030271, tablet: id=10030272, tablet: id=10030273, ]; db 10030240 table 10030268 partition partitionId: 10030270; partitionName: $shadow_automatic_partition_10030270; parentPartitionId: 10030267; shardGroupId: 286003; isImmutable: false; baseIndex: index id: 10030269; index state: NORMAL; shardGroupId: 286003; row count: 0; tablets size: 3; visibleTxnId: 0; tablets: [tablet: id=10030271, tablet: id=10030272, tablet: id=10030273, ]; ; rollupCount: 0; visibleVersion: 1; visibleVersionTime: 1773388328988; committedVersion: 1; dataVersion: 1; committedDataVersion: 1; versionEpoch: 410101280120242176; versionTxnType: TXN_NORMAL; storageDataSize: 0; storageRowCount: 0; storageReplicaCount: 3; bucketNum: 3;

2026-03-13 07:52:08.998Z INFO (TableKeeper|100) [TabletTaskExecutor.buildPartitionsSequentially():107] build partitions sequentially, send task one by one, all tasks timeout 60s

2026-03-13 07:52:14.166Z INFO (global_state_checkpoint_controller|282) [BDBJEJournal.getFinalizedJournalId():272] database names: 20319174

2026-03-13 07:52:14.166Z INFO (global_state_checkpoint_controller|282) [CheckpointController.runCheckpointControllerWithIds():152] checkpoint imageJournalId 20319173, logJournalId 0

The UI screenshots come from two of several test runs. Every run leaves a stuck statistics query.

Some statements show 100% progress but never finish.
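
For anyone trying to reproduce this, here is a rough sketch of the checks that can be run from a new client connection while the cluster is stuck. It assumes the standard StarRocks admin statements are available to the account; the output will differ per cluster. Note that raising the timeout did not help in this case, so the first statement only confirms the value reported in the exception.

```sql
-- Confirm the current value of the timeout named in the exception (60 in the log above).
ADMIN SHOW FRONTEND CONFIG LIKE 'tablet_create_timeout_second';

-- Check whether the CN named in the exception (starrocks-cn-5) is still reported as alive.
SHOW COMPUTE NODES;

-- List the queries the FE considers running, to look for the stuck statistics query.
SHOW PROC '/current_queries';
```

If the CN still shows as alive while tablet creation on it keeps timing out, that would match the behaviour above, where only that CN was slow to shut down.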