详细的物化视图建表语句

CREATE MATERIALIZED VIEW IF NOT EXISTS dws_k12soe_summary_mv_1h_v2

PARTITION BY str2date (dt,’%Y-%m-%d’

)

REFRESH ASYNC

START (‘2024-01-01 09:00:00’) EVERY (INTERVAL 1 hour)

AS

SELECT dt,

SUM(CASE

WHEN app_key = 'xx'

AND msg LIKE '%测评完成%'

AND msg NOT LIKE '%服务端测评%' THEN 1

ELSE 0 END) AS soe_success_cnt,

SUM(CASE

WHEN app_key = 'xxxx'

AND msg LIKE '%失败%'

AND msg NOT LIKE '%服务端测评%'

AND msg NOT LIKE '%测评取消失败%'

AND msg NOT LIKE '%测评结束失败%'

AND msg NOT LIKE '%初始化语音测评失败%'

AND msg NOT LIKE '%联系客服%'

AND msg NOT LIKE '%系统错误,请联系客服为您处理(err:999)%'

AND (action = 'xxxx' OR action = 'xx') THEN 1

ELSE 0 END) AS soe_fail_cnt,

SUM(CASE

WHEN level = 'error'

AND app_key = 'xxx'

AND msg LIKE '%首次服务端测评%'

AND get_json_object(msg, '$.success') = 'success' THEN 1

ELSE 0 END) AS soe_first_success_cnt,

SUM(CASE

WHEN app_key = 'xxx'

AND msg LIKE '%测评成功%'

AND msg LIKE '%服务端测评%' THEN 1

ELSE 0 END) AS server_soe_success_cnt,

SUM(CASE

WHEN app_key = 'xxxx'

AND msg LIKE '%失败%'

AND msg LIKE '%服务端测评%' THEN 1

ELSE 0 END) AS server_soe_fail_cnt,

SUM(CASE

WHEN level = 'error'

AND version = '3.7.5'

AND msg LIKE '%录音失败%'

AND get_json_object(msg, '$.offlineEvaluating') = '0' THEN 1

ELSE 0 END) AS default_soe_fail_cnt,

SUM(CASE

WHEN level = 'error'

AND version = '3.7.5'

AND msg LIKE '%录音失败%'

AND get_json_object(msg, '$.offlineEvaluating') = '1' THEN 1

ELSE 0 END) AS default_server_soe_fail_cnt,

COUNT(DISTINCT CASE WHEN app_key = 'xxxx' THEN uid ELSE NULL END) AS soe_people_cnt,

SUM(CASE

WHEN app_key = 'xxxx'

AND msg LIKE '%测评完成%'

AND msg NOT LIKE '%服务端测评%'

AND msg LIKE '%xxxx%' THEN 1

ELSE 0 END) AS clio_success_cnt,

SUM(CASE

WHEN app_key = 'xxxx'

AND msg LIKE '%失败%'

AND msg NOT LIKE '%服务端测评%'

AND msg NOT LIKE '%测评取消失败%'

AND msg NOT LIKE '%测评结束失败%'

AND msg NOT LIKE '%初始化语音测评失败%'

AND msg NOT LIKE '%系统错误,请联系客服为您处理(err:999)%'

AND (action = 'xxxxx' OR action = 'SOELog')

AND get_json_object(get_json_object(msg, '$.info'), '$.engineType') = '3' THEN 1

ELSE 0 END) AS clio_fail_cnt,

SUM(CASE

WHEN app_key = 'xxx'

AND msg LIKE '%测评完成%'

AND msg NOT LIKE '%服务端测评%'

AND msg LIKE '%xxxx%' THEN 1

ELSE 0 END) AS stkouyu_success_cnt,

SUM(CASE

WHEN app_key = 'xxx'

AND msg LIKE '%失败%'

AND msg NOT LIKE '%测评取消失败%'

AND msg NOT LIKE '%测评结束失败%'

AND msg NOT LIKE '%初始化语音测评失败%'

AND msg NOT LIKE '%系统错误,请联系客服为您处理(err:999)%'

AND (action = 'xxx' OR action = 'xxxx')

AND get_json_object(get_json_object(msg, '$.info'), '$.engineType') = '0' THEN 1

ELSE 0 END) AS stkouyu_fail_cnt,

SUM(CASE

WHEN app_key = 'unitest'

AND msg LIKE '%测评完成%'

AND msg NOT LIKE '%服务端测评%'

AND msg LIKE '%xxxx%' THEN 1

ELSE 0 END) AS zhiyan_success_cnt,

SUM(CASE

WHEN app_key = 'xxx'

AND msg LIKE '%失败%'

AND msg NOT LIKE '%测评取消失败%'

AND msg NOT LIKE '%测评结束失败%'

AND msg NOT LIKE '%初始化语音测评失败%'

AND msg NOT LIKE '%系统错误,请联系客服为您处理(err:999)%'

AND (action = 'xxxx' OR action = 'xxxx')

AND get_json_object(get_json_object(msg, '$.info'), '$.engineType') = '5' THEN 1

ELSE 0 END) AS zhiyan_fail_cnt,

SUM(CASE

WHEN app_key = 'unitest'

AND msg LIKE '%测评完成%'

AND msg NOT LIKE '%服务端测评%'

AND msg NOT LIKE '%xxxx%'

AND msg NOT LIKE '%xxxx%'

AND msg NOT LIKE '%xxxxx%'

AND msg LIKE '%xxxxx%' THEN 1

ELSE 0 END) AS offline_success_cnt,

SUM(CASE

WHEN app_key = 'unitest'

AND msg LIKE '%测评完成%'

AND msg NOT LIKE '%服务端测评%'

AND msg LIKE '%xxxxx%' THEN 1

ELSE 0 END) AS chisheng_success_cnt,

SUM(CASE

WHEN app_key = 'unitest'

AND msg LIKE '%失败%'

AND msg NOT LIKE '%服务端测评%'

AND msg NOT LIKE '%测评取消失败%'

AND msg NOT LIKE '%测评结束失败%'

AND msg NOT LIKE '%初始化语音测评失败%'

AND msg NOT LIKE '%系统错误,请联系客服为您处理(err:999)%'

AND (action = 'xx' OR action = 'xxx')

AND get_json_object(get_json_object(msg, '$.info'), '$.engineType') = '1' THEN 1

ELSE 0 END) AS chisheng_fail_cnt,

SUM(CASE

WHEN action = 'xxx-xx-xx'

AND channel = 'xx-xx-score'

AND device_id = 'xx-voice-xx'

THEN 1

ELSE 0 END) AS optimus_score_count,

SUM(CASE

WHEN action = 'xxxxx'

AND channel = 'xxx'

AND device_id = 'xxxx'

AND msg LIKE '%\"result\":true%' THEN 1

ELSE 0 END) AS optimus_score_success_cnt,

SUM(CASE

WHEN level = 'error'

AND action = '暂无'

AND get_json_object(msg, '$.offlineEvaluating') = '0'

AND get_json_object(msg, '$.isErrBeforeStart') = 'true' THEN 1

ELSE 0 END) AS unsensible_fail_cnt

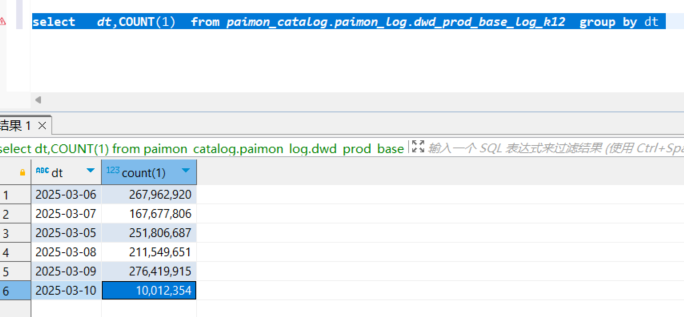

FROM paimon_catalog.paimon_log.dwd_prod_base_log_k12

WHERE dt = concat(current_date)-- 仅处理当前日期的数据

GROUP BY dt;

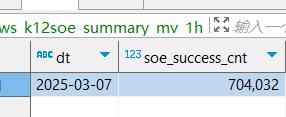

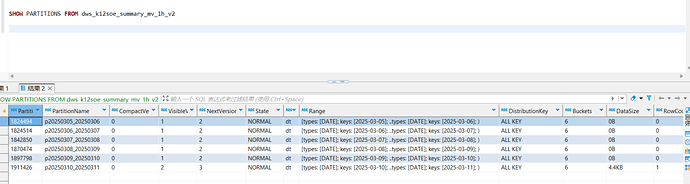

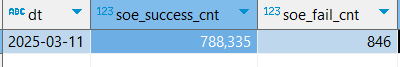

始终在覆盖数据。求加急

始终在覆盖数据。求加急